For 25 years, the entire direction of travel in enterprise software was the same: everything moved to the browser. CRM on CDs gave way to Salesforce in a tab, Office gave way to Google Docs, Sketch gave way to Figma, and every installer eventually got replaced by a URL. The logic behind that shift was airtight: zero-friction distribution, one codebase across every operating system, native multiplayer, continuous deployment, and a subscription revenue model that buyers actually preferred. The web won so decisively that even Adobe capitulated to subscription pricing in 2013, and Microsoft declared itself “cloud-first” within 52 days of Satya Nadella taking over in 2014. If you were building software in 2020 and told a VC you were shipping a desktop app, you were laughed out of the room.

And then, somewhere in the last 18 months, every AI-native company that could have stayed browser-only started shipping desktop apps instead.

OpenAI released a ChatGPT Mac app in May 2024, before they had reached feature parity on mobile. Anthropic followed with Claude desktop in November, alongside the Model Context Protocol, which went from around 2 million to 97 million monthly SDK downloads in 16 months. A core purpose of MCP is giving AI access to local files and tools that browsers cannot reach. Perplexity shipped a native Mac app. Cursor, a desktop IDE you download and install the old-fashioned way, is reportedly in talks to raise at a $50 billion valuation, roughly the price tag last attached to a desktop-first software company back when that company was Microsoft.

Meanwhile Ollama, which exists purely to run AI models locally on your laptop with no API call involved, went from around 100,000 monthly downloads in early 2023 to over 52 million in early 2026. That is a 520x increase in three years for a product whose defining feature is that it does not touch the cloud. And Microsoft, the same Microsoft that was cloud-first, now requires every Copilot+ PC to ship with a neural processing unit capable of at least 40 trillion operations per second (TOPS) of on-device AI compute. The company that spent a decade telling its customers to move everything to Azure is now re-engineering consumer PCs around local inference.

The Web Won on Five Things, and AI Wants All Five Reversed

Every piece of enterprise software that moved to the browser did so because the browser offered five structural advantages: zero-friction distribution, SaaS economics, native multiplayer collaboration, cross-device access, and continuous deployment. For most categories, those advantages were decisive. Desktop software held on only in the handful of places where GPU access, filesystem access, offline reliability, or sub-10-millisecond latency were in the critical path. Video editing stayed on the desktop. So did CAD, IDEs, gaming, and anything that needed to push pixels or bits in real time. Those constraints were not ideological. They were physics.

And every serious AI workload happens to sit squarely inside them. AI agents need to read your actual codebase, not whatever you remembered to paste into a chat window. They run for minutes or hours, not the lifetime of a browser tab that can be throttled or suspended the moment you switch windows. Meeting copilots need raw screen and audio access that browsers wall off by design, for good security reasons. Voice AI and autocomplete UX fall apart the moment you introduce a network round-trip, which is why Cursor feels instant and most browser-based AI tools feel laggy. The same constraints that kept Premiere on the desktop in 2005 are now shaping the entire AI application layer in 2026, which means for the first time in a generation the list of software categories that have to live on your machine is growing rather than shrinking.

And That Creates a Measurement Problem

Here is where this gets interesting for anyone trying to run an enterprise AI program.

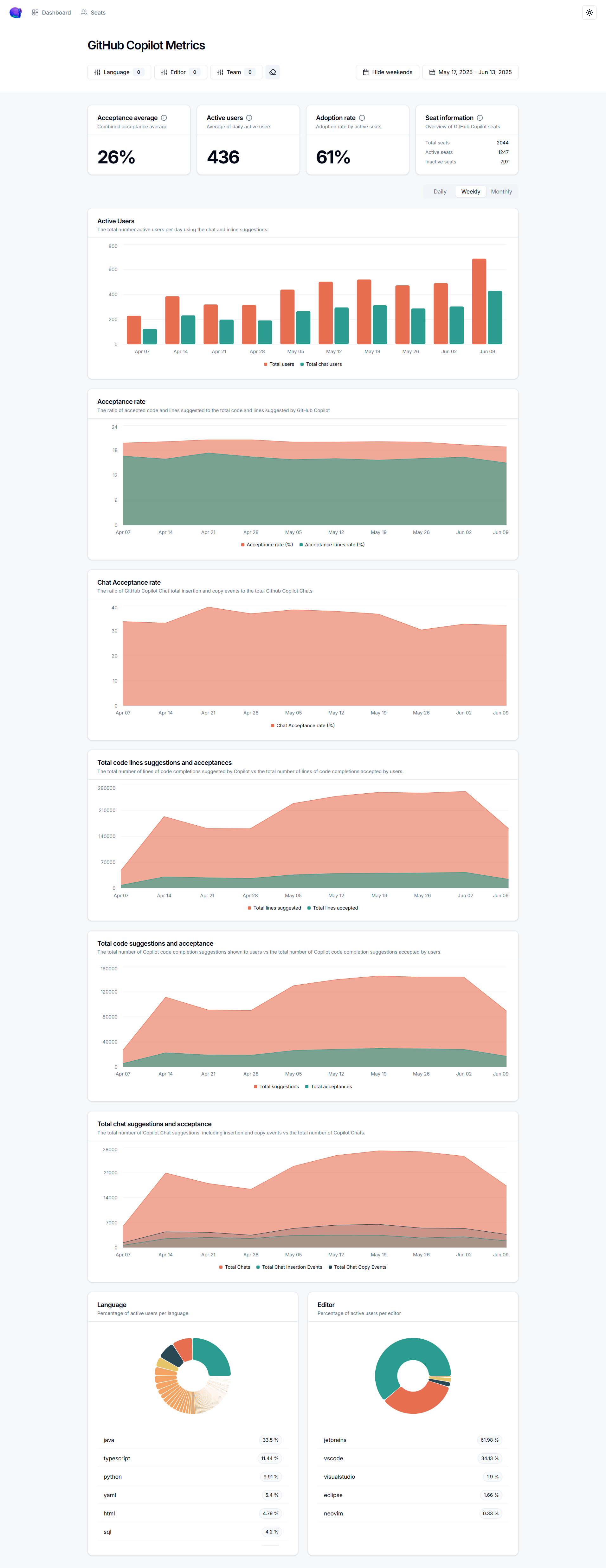

When AI lived in the browser, you could measure it. Your employees logged into ChatGPT through a centralized account, or they used a SaaS tool whose admin console told you exactly who used what and when. Single sign-on, audit logs, API gateway usage reports, the entire governance stack that evolved for SaaS could be pointed at AI with a few configuration tweaks. The web’s centralization was a pain point for vendors in 2000 and a gift to CIOs in 2020. Everything flowed through a known endpoint, and everything left a trace.

The desktop renaissance is dismantling that model, category by category, in a matter of months.

A developer using Cursor is pointing AI at your codebase from their local machine, and your IT team cannot see what they are doing through any centralized log. A knowledge worker using Claude desktop is having conversations with a foundation model that may or may not touch your network. A sales leader using Granola is recording every meeting on their device, with no browser session to inspect. A product team experimenting with Ollama is pulling seventy-billion-parameter models down from Hugging Face and running inference entirely offline, with no API call that your network observability tools can capture. The shadow AI problem that was already keeping CISOs up at night is about to get qualitatively worse, because the new generation of AI tools is built in ways that bypass the centralized chokepoints that corporate governance depends on.

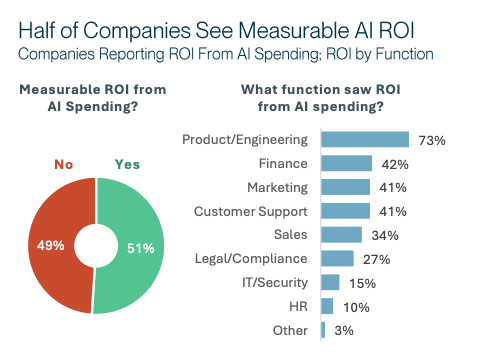

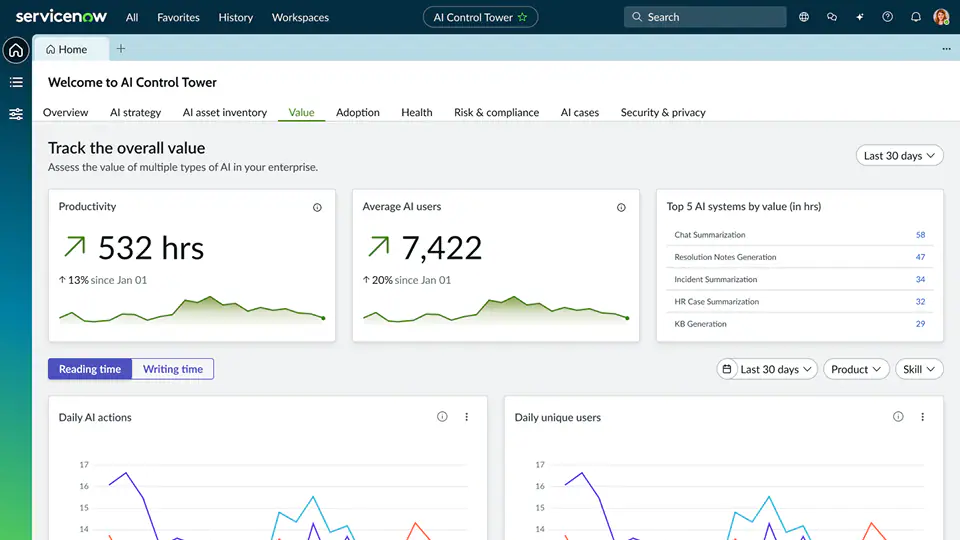

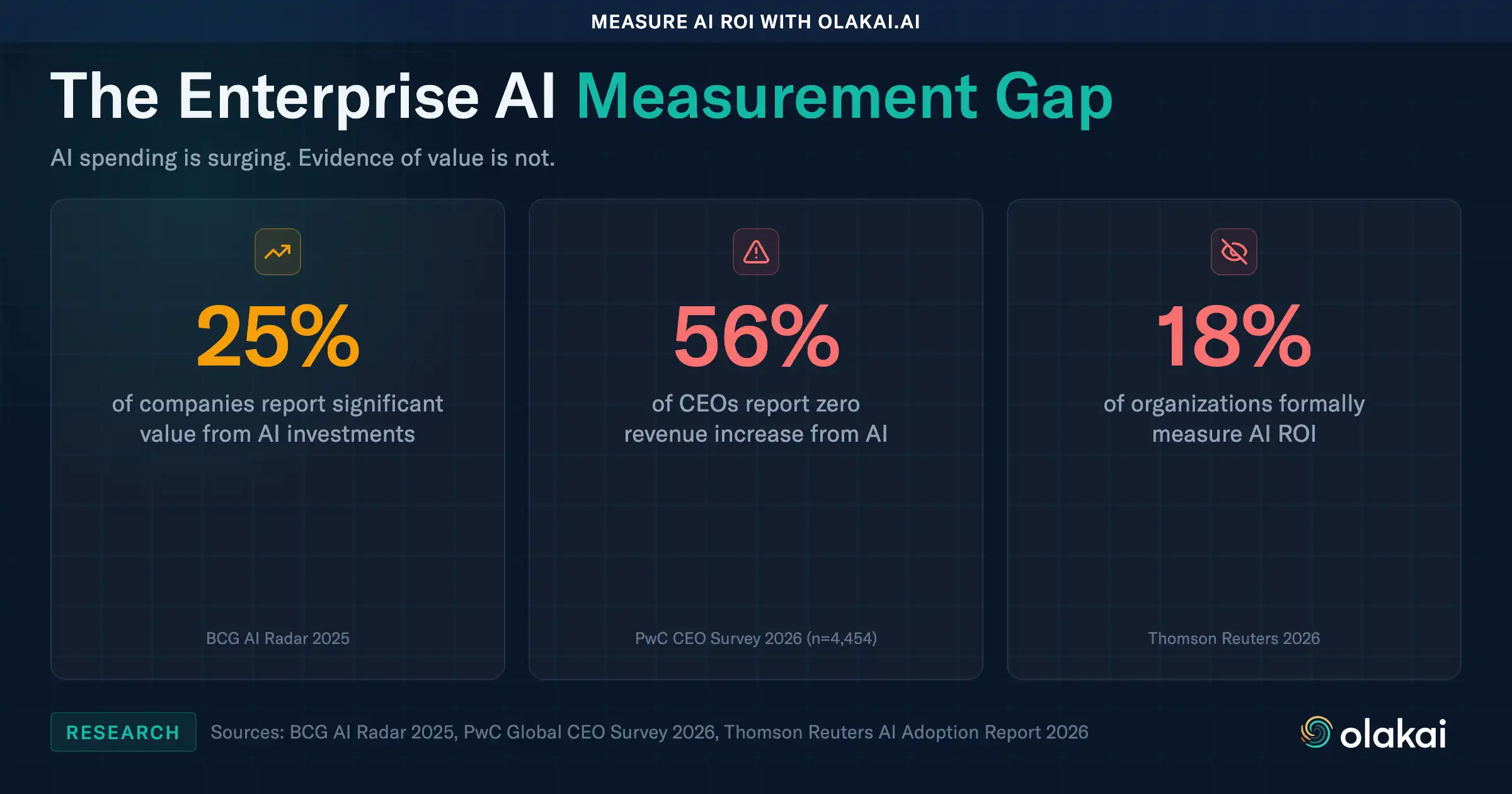

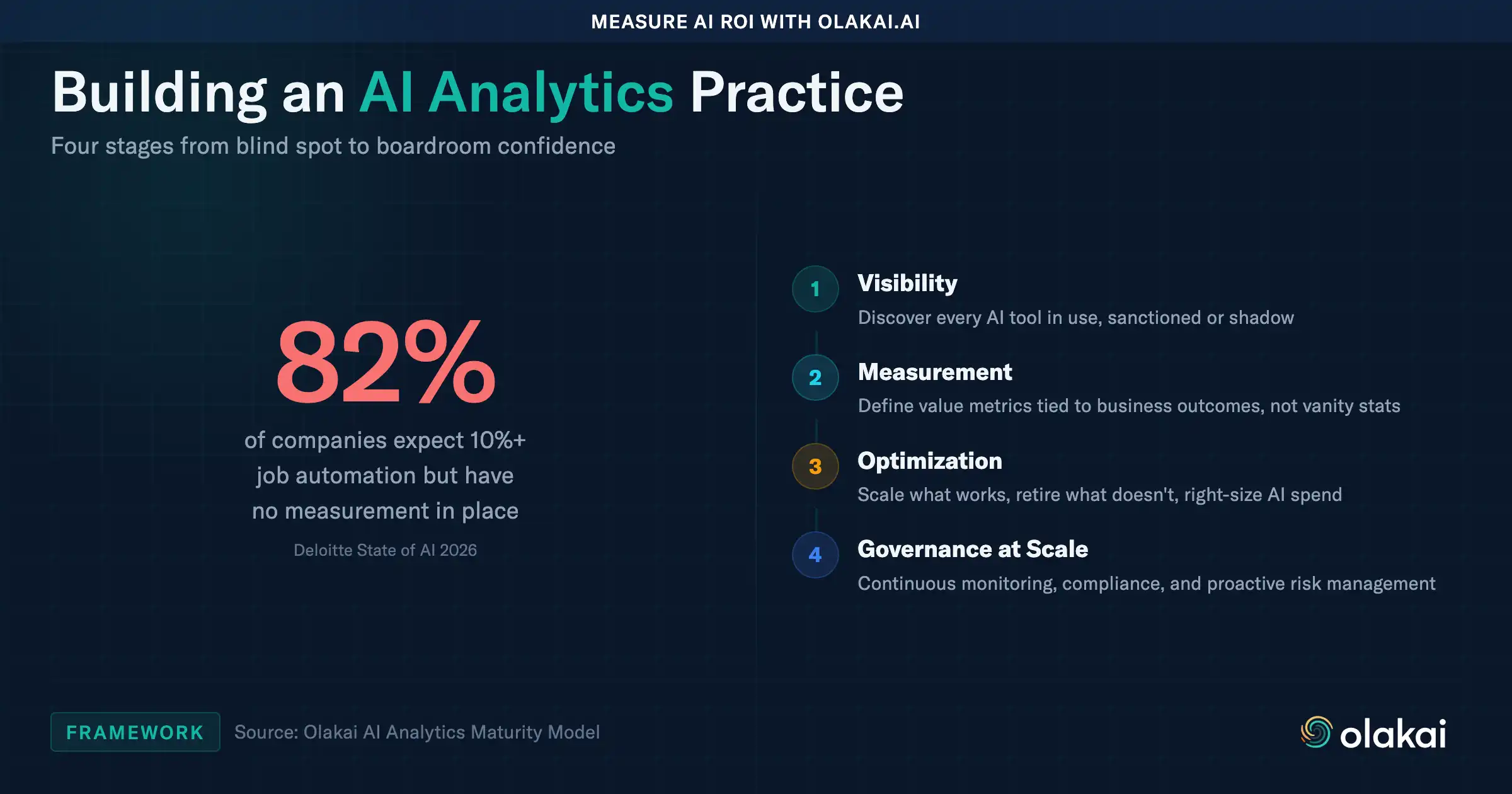

You cannot measure what you cannot see, and you cannot govern what you cannot measure. The measurement gap that enterprises are already struggling to close in their AI ROI programs is about to widen significantly, precisely at the moment when boards and CFOs are starting to demand proof of value.

What the Old Playbook Got Wrong

For years, the default AI governance playbook at most enterprises has been some version of: restrict access to sanctioned tools, route traffic through an approved gateway, and generate usage reports from the gateway logs. That playbook works reasonably well when the AI tool in question is a cloud-hosted chatbot that an employee reaches through a browser. It falls apart the moment the AI tool is a desktop app that talks directly to a foundation model provider, or worse, runs inference on the laptop itself.

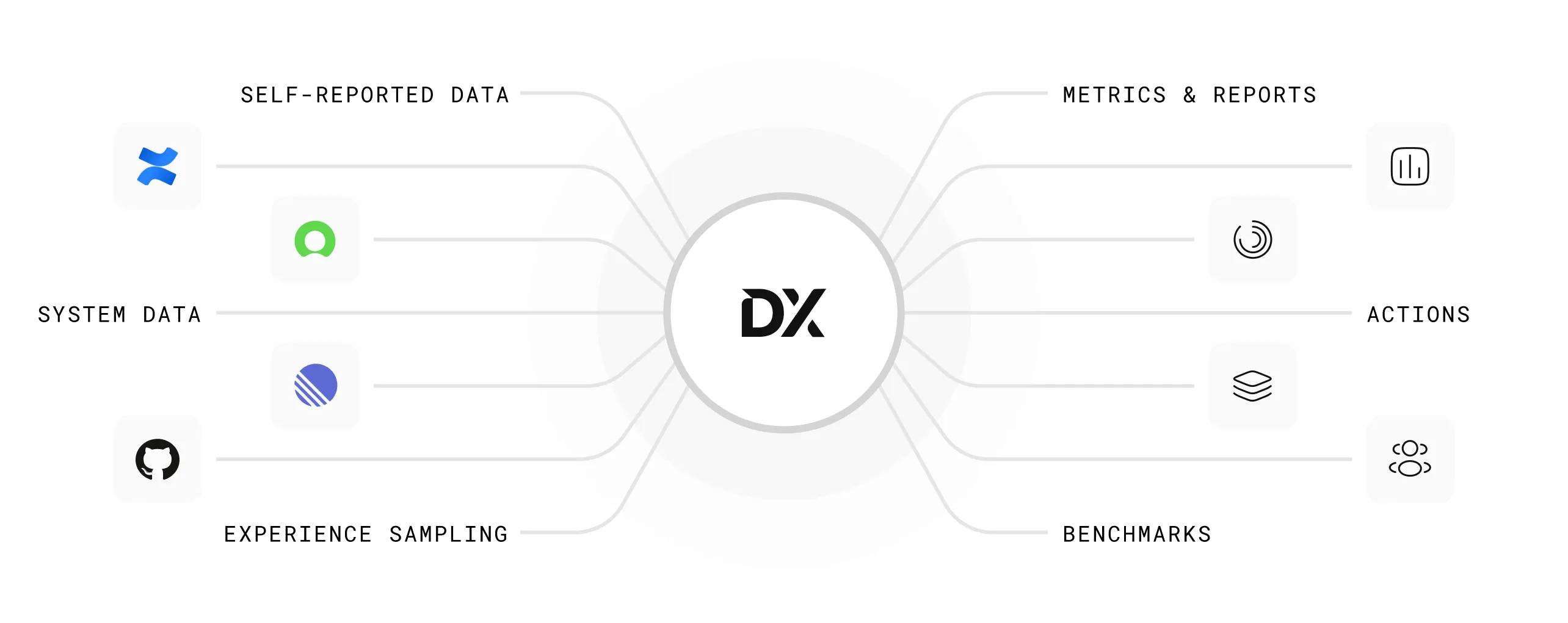

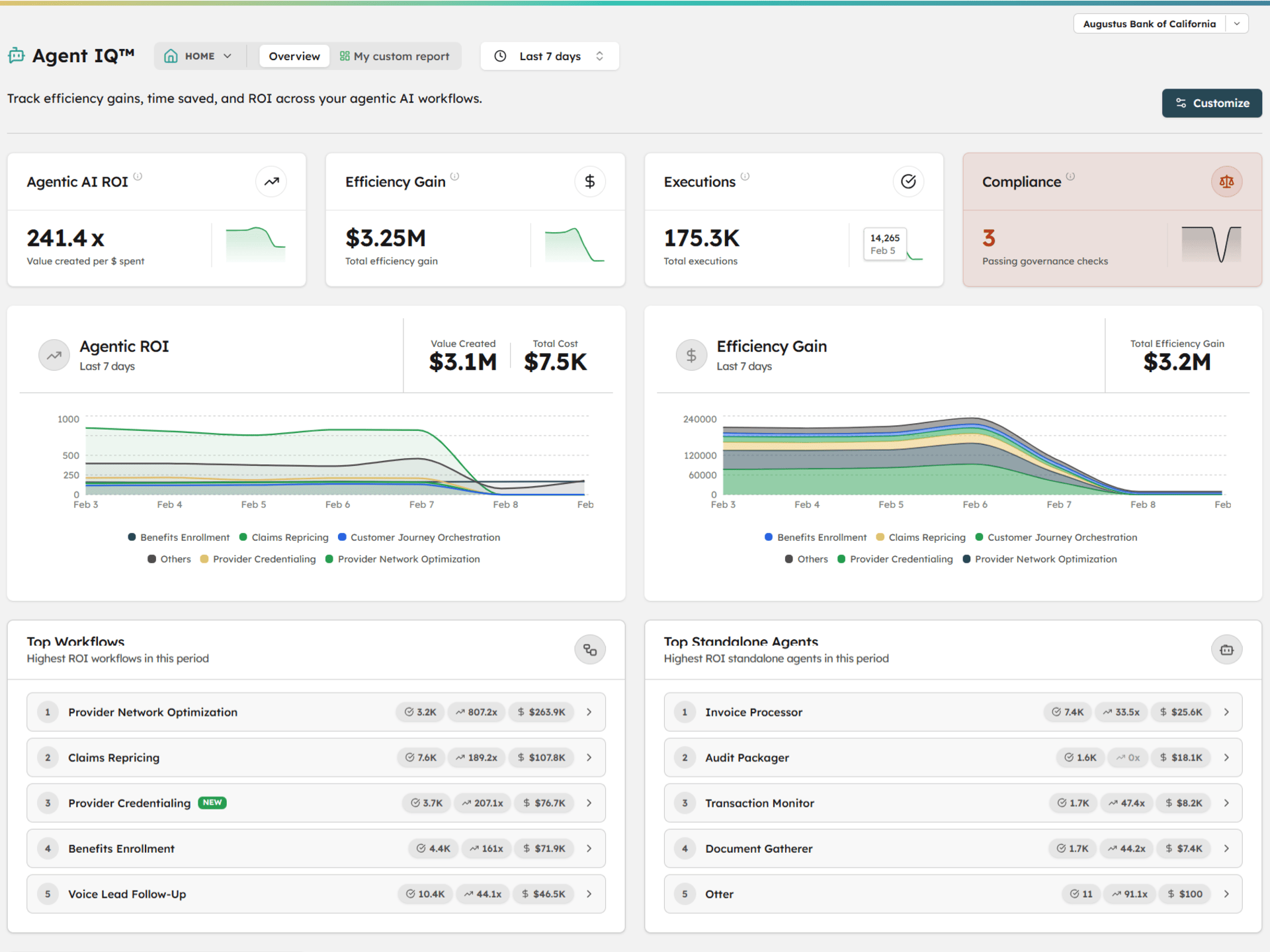

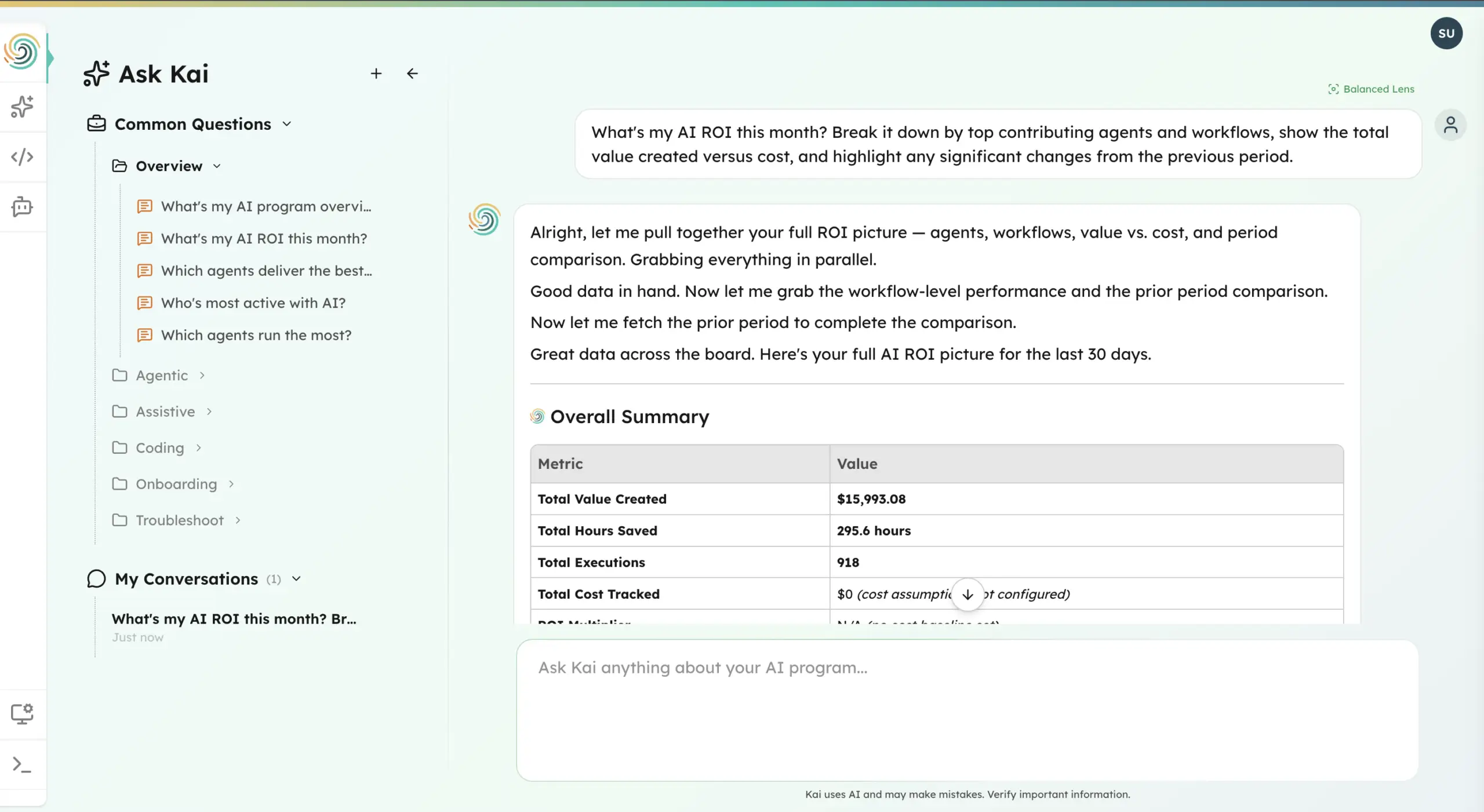

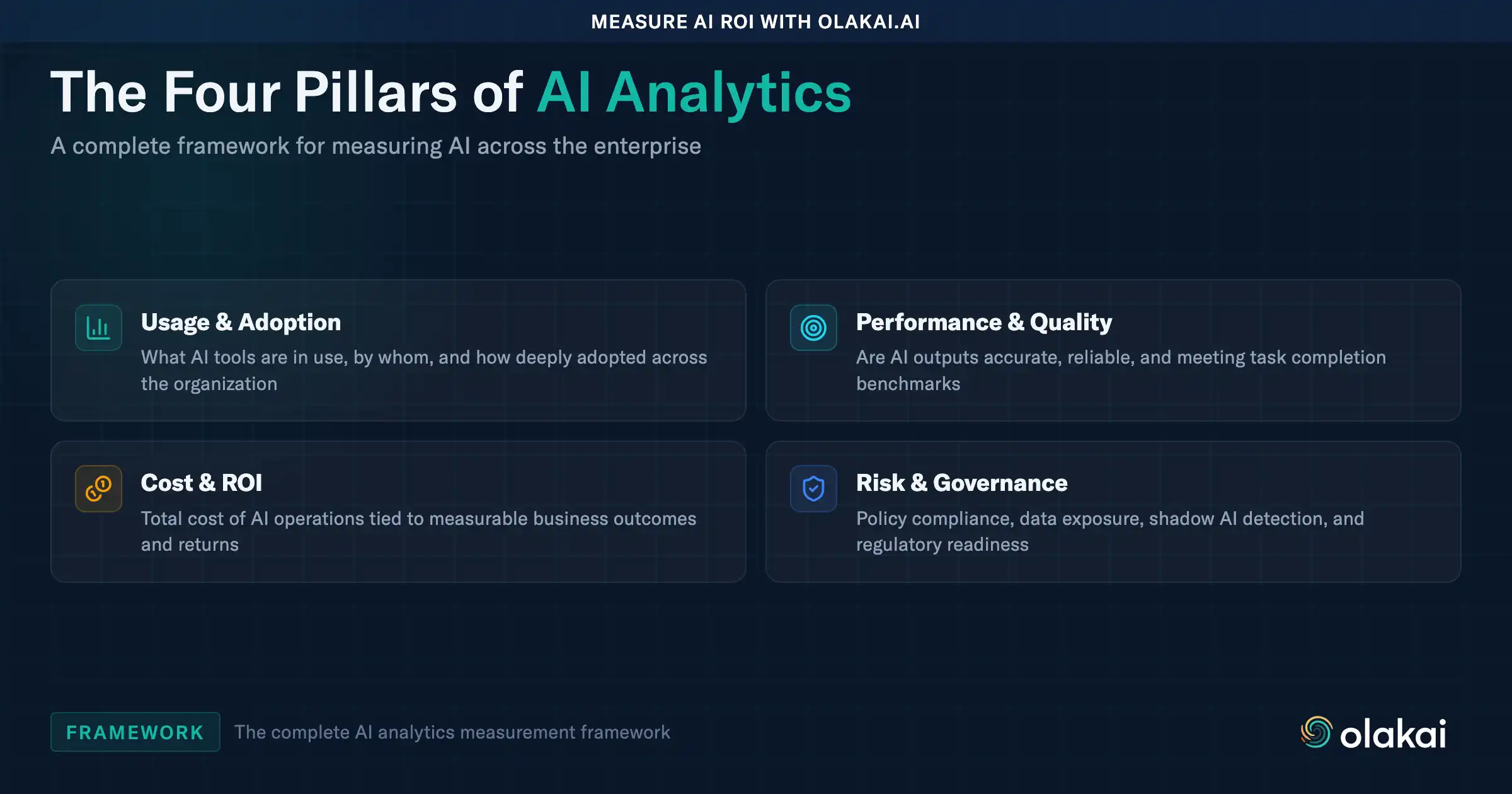

The uncomfortable truth is that measurement and governance in an AI-first enterprise cannot be built from the network layer or from SaaS admin consoles alone. You need a visibility layer that works across cloud, browser, desktop, and local-inference environments, and that treats each AI interaction as an observable event regardless of where the compute happened. You need metrics that CFOs actually want to see rather than vanity counts of API calls. And you need a governance model that assumes AI usage is heterogeneous and distributed by default, not centralized and inspectable by default.
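To make "each AI interaction as an observable event" a little more concrete, here is a minimal sketch in Python, assuming a hypothetical normalized event schema. The `Environment` taxonomy, the `AIEvent` fields, and the two adapter functions are illustrative inventions, and the raw record shapes they accept are made up; they are not the log format of any real gateway, endpoint agent, or product. The point is only that records from very different sources can be mapped into one shape.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from enum import Enum


class Environment(Enum):
    """Where the AI interaction happened (hypothetical taxonomy)."""
    CLOUD_API = "cloud_api"
    BROWSER = "browser"
    DESKTOP_APP = "desktop_app"
    LOCAL_INFERENCE = "local_inference"


@dataclass(frozen=True)
class AIEvent:
    """One AI interaction, normalized regardless of where the compute ran."""
    user: str
    tool: str
    environment: Environment
    model: str
    timestamp: datetime


def from_gateway_log(record: dict) -> AIEvent:
    # Hypothetical API-gateway record: {"sub", "app", "model", "ts" (Unix seconds)}
    return AIEvent(
        user=record["sub"],
        tool=record["app"],
        environment=Environment.CLOUD_API,
        model=record["model"],
        timestamp=datetime.fromtimestamp(record["ts"], tz=timezone.utc),
    )


def from_endpoint_agent(record: dict) -> AIEvent:
    # Hypothetical on-device telemetry record:
    # {"user", "process", "model", "local" (bool), "time" (ISO 8601 string)}
    env = Environment.LOCAL_INFERENCE if record["local"] else Environment.DESKTOP_APP
    return AIEvent(
        user=record["user"],
        tool=record["process"],
        environment=env,
        model=record["model"],
        timestamp=datetime.fromisoformat(record["time"]),
    )


# Two very different raw records end up as the same kind of event.
events = [
    from_gateway_log(
        {"sub": "alice", "app": "chat-portal", "model": "example-cloud-model", "ts": 1_700_000_000}
    ),
    from_endpoint_agent(
        {"user": "bob", "process": "ollama", "model": "example-local-70b",
         "local": True, "time": "2026-01-15T09:30:00+00:00"}
    ),
]
```

Each new source only needs its own small adapter; everything downstream (metrics, policy checks, reporting) can then work against `AIEvent` without caring whether the interaction went through a gateway or ran on a laptop.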

What Leaders Should Do Now

The shift to AI-native desktop is not a reason to panic, and it is not a reason to try to block desktop AI apps. Every serious study on enterprise AI adoption points to the same conclusion: knowledge workers will use the tools that make them productive, and the companies that lean into that rather than fighting it capture disproportionate value. The question is not whether to allow your teams to use Cursor and Claude and Ollama. The question is whether you can see enough of what is happening across all of them to understand the true ROI of agentic AI, catch governance failures before they become incidents, and make informed decisions about where to invest next.

That starts with accepting that your AI measurement layer needs to extend into the desktop, the IDE, and the on-device inference runtime, not just the browser. It continues with building unified AI analytics that aggregate events from across environments into a single view. And it ends with a governance model that is resilient to heterogeneity, because the direction of travel for the next five years is more AI, in more places, running on more devices, against more models, not less.
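As a rough illustration of what "a single view" might mean in practice, the sketch below rolls normalized events from several environments up into one summary. It assumes a hypothetical, deliberately minimal event record (user, tool, environment); the field names, environment labels, and summary keys are assumptions made for this example, not a prescribed schema or any vendor's API.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class AIEvent:
    # Minimal hypothetical normalized event; a real schema would carry more fields.
    user: str
    tool: str
    environment: str  # e.g. "browser", "desktop_app", "local_inference"


def unified_view(events: list[AIEvent]) -> dict:
    """Aggregate events from every environment into one summary."""
    # How much activity happens in each environment.
    by_environment = Counter(e.environment for e in events)
    # Distinct users per tool, regardless of where the tool runs.
    users_by_tool: dict[str, set[str]] = defaultdict(set)
    for e in events:
        users_by_tool[e.tool].add(e.user)
    return {
        "events_by_environment": dict(by_environment),
        "active_users_by_tool": {tool: len(users) for tool, users in users_by_tool.items()},
        "total_events": len(events),
    }


events = [
    AIEvent("alice", "chat-portal", "browser"),
    AIEvent("alice", "cursor", "desktop_app"),
    AIEvent("bob", "cursor", "desktop_app"),
    AIEvent("bob", "ollama", "local_inference"),
    AIEvent("bob", "cursor", "desktop_app"),
]
summary = unified_view(events)
```

Counting distinct users per tool alongside raw event volume is one simple way to avoid the "vanity counts of API calls" problem: a tool with heavy traffic from a single user reads very differently from one with broad adoption.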

The desktop is back. The browser is not going away. Most enterprises will run both, permanently. The organizations that win will be the ones that can see across both, measure across both, and make decisions grounded in that visibility.

If you are thinking about how to build that measurement layer inside your organization, we would love to talk.