A CFO recently told us she received an AI progress report from her technology team. It showed 92% employee adoption, 10,000 daily prompts, 4.3 out of 5 user satisfaction, and 99.7% uptime. She looked at it for thirty seconds and asked one question: “How much revenue did this generate?” The room went quiet.

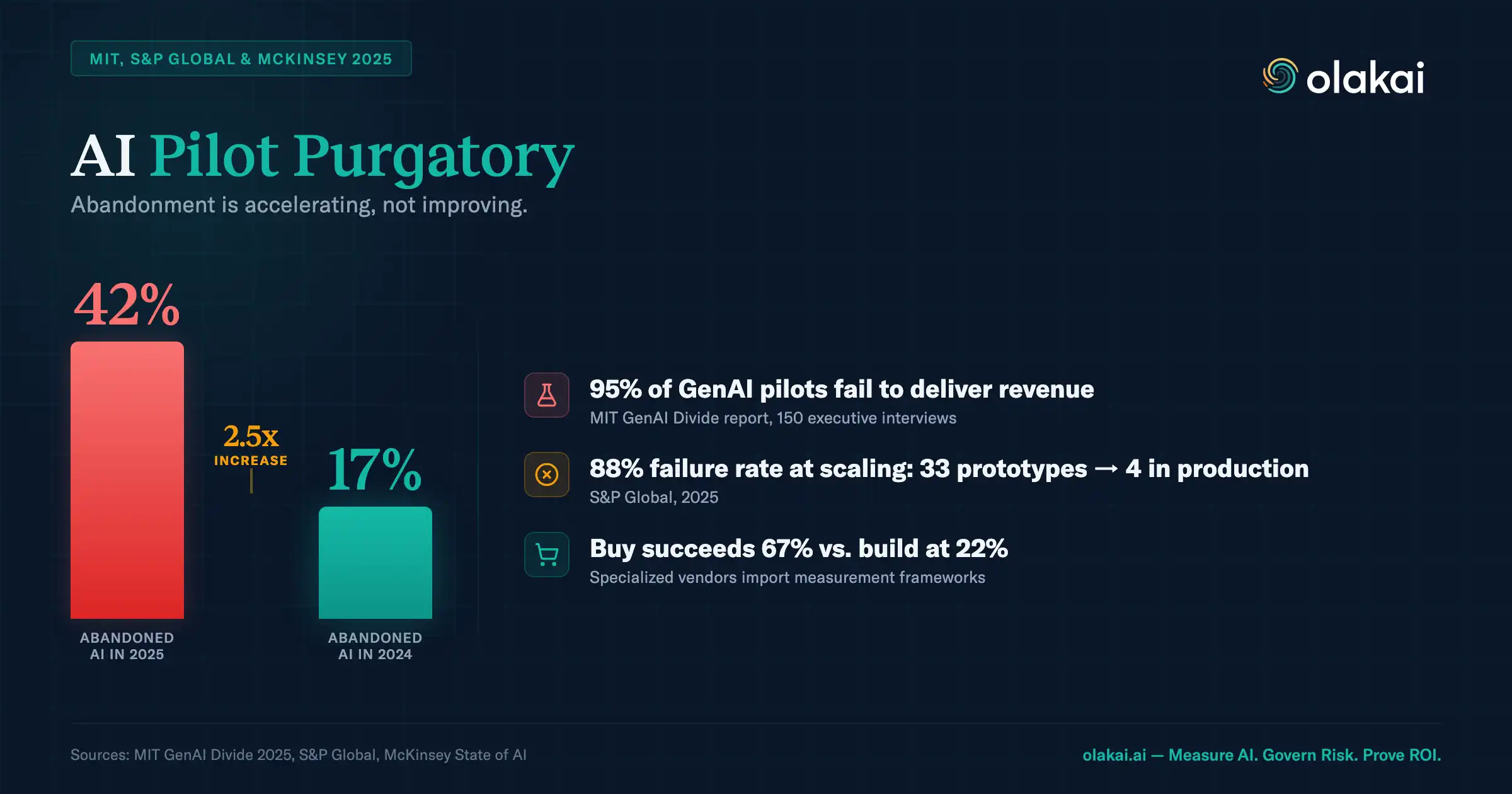

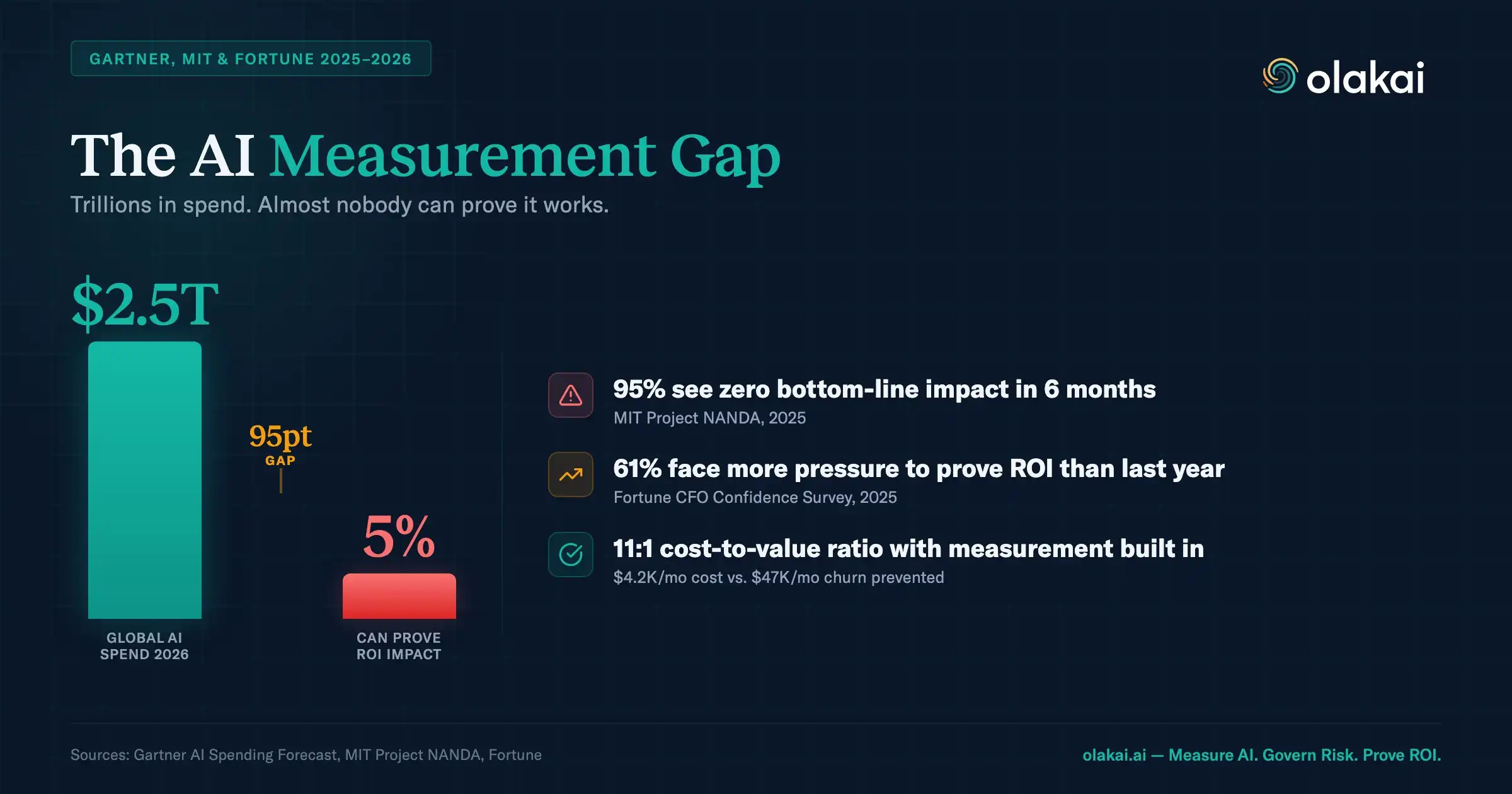

That silence is playing out in boardrooms everywhere. McKinsey’s State of AI research found that fewer than 20% of enterprises track defined KPIs for their generative AI initiatives. That is not the share that track them well; it is the share that track them at all. Yet tracking those KPIs is the single strongest predictor of whether AI delivers bottom-line impact.

This is the MEASURE problem, the second step in the SEE, MEASURE, DECIDE, ACT framework. (This article is one of four companion deep-dives; see also SEE, DECIDE, and ACT.) Once you can see what AI is running across your organization, the next challenge is measuring what actually matters. And what matters to the CFO is almost never what technology teams measure first.

The Metrics Theater Problem

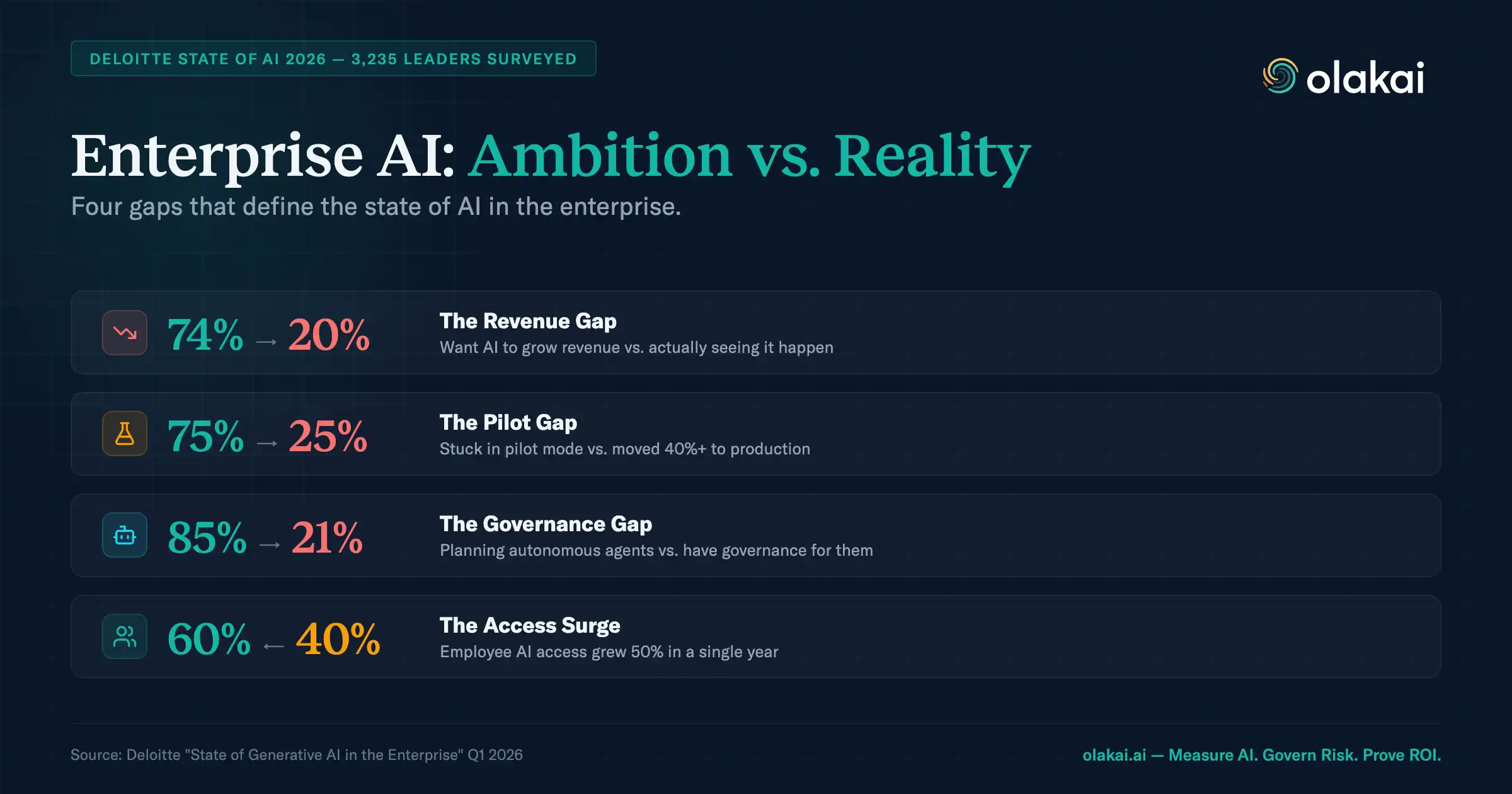

Eighty-seven percent of CFOs say AI will be extremely or very important to finance operations in 2026, according to Deloitte’s CFO Signals survey. They’re allocating budget accordingly — tech spending on AI is expected to rise from 8% to 13% of total technology budgets over the next two years. Yet only 21% of active AI users report that AI has delivered clear, measurable value.

The problem isn’t that AI fails to deliver value. It’s that organizations measure the wrong things. They track adoption rates, session counts, and user satisfaction — metrics that answer “are people using AI?” but not “is AI making us money?” IBM found that 79% of organizations see productivity gains from AI, but only 29% can measure ROI confidently. The productivity is real. The measurement isn’t.

This creates what we call metrics theater: impressive dashboards full of activity data that tell a compelling adoption story but can’t answer a single P&L question. The CFO doesn’t care that 10,000 prompts were submitted yesterday. She cares that the customer success team’s AI-assisted response time dropped from 4 hours to 45 minutes, which reduced churn by 12%, which saved $2.3 million in annual recurring revenue. That’s the same AI activity, measured differently, and only the second version survives a board meeting.

Vanity Metrics vs. Value Metrics

The distinction matters because it determines what gets funded. When you present vanity metrics, the board sees cost without context. When you present value metrics, the board sees investment with returns.

Vanity metrics tell you AI is being used. They include adoption rate (percentage of employees who have logged in), volume metrics (prompts submitted, queries processed, tokens consumed), technical performance (latency, accuracy, uptime), and user sentiment (satisfaction surveys, NPS from internal users). These metrics matter to engineering teams managing infrastructure. They are meaningless to the people who control the budget.

Value metrics tell you AI is producing outcomes. They include revenue impact (deals influenced, leads converted, upsell driven by AI recommendations), cost reduction (hours saved multiplied by fully loaded labor cost, infrastructure cost avoided, error remediation reduced), risk metrics (compliance incidents prevented, data exposure avoided, audit findings reduced), and time-to-outcome (cycle time compression, faster time to market, reduced mean time to resolution).
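The cost-reduction metric above (hours saved multiplied by fully loaded labor cost) is simple arithmetic, and a minimal sketch makes the inputs explicit. The function name and the sample figures are hypothetical, chosen only for illustration; in practice the hours saved should come from a measured comparison against a baseline rather than a self-reported estimate.

```python
def labor_cost_savings(hours_saved: float, fully_loaded_hourly_cost: float) -> float:
    """Cost reduction = hours saved x fully loaded labor cost.

    "Fully loaded" is assumed to mean salary plus benefits, taxes, and overhead.
    """
    if hours_saved < 0 or fully_loaded_hourly_cost < 0:
        raise ValueError("inputs must be non-negative")
    return hours_saved * fully_loaded_hourly_cost


# Hypothetical inputs: 400 measured hours saved in a month at $85 per hour.
monthly_savings = labor_cost_savings(hours_saved=400, fully_loaded_hourly_cost=85.0)
print(f"${monthly_savings:,.0f}")  # $34,000
```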

McKinsey’s research is unambiguous on this point: organizations that tie AI to specific business KPIs are significantly more likely to report EBIT impact than those that track only usage. The metric itself isn’t what drives results; the discipline of connecting AI activity to business outcomes is.

What CFOs Actually Want to See

In our work with finance leaders across industries, their requests cluster into four categories:

Hard ROI — dollars in, dollars out. CFOs want to see the investment (AI tooling costs, infrastructure, implementation, training) alongside the return (labor cost reduction, operational efficiency gains, revenue influenced). Not estimates. Not projections based on “time saved.” Actual financial impact traced to specific AI initiatives. This is where most enterprises fall short, because connecting AI activity to downstream financial outcomes requires measurement infrastructure that most organizations haven’t built.

Portfolio view — which bets are paying off. CFOs don’t manage single projects. They manage portfolios. They want to see all AI investments side by side: cost-to-value ratio by use case, department, and AI tool. Which of the fifteen AI initiatives running across the organization are generating returns? Which should be scaled? Which should be sunset? Without this portfolio view, every budget conversation becomes a case-by-case negotiation instead of a strategic allocation.

Risk-adjusted returns — the full picture. Revenue and cost savings are only part of the equation. CFOs also need to see the risk profile of AI initiatives: compliance exposure, data security incidents, governance gaps. An AI agent that saves $500,000 annually but creates unquantified regulatory risk isn’t necessarily a good investment. The metric that matters is risk-adjusted return — and that requires integrating governance data with performance data.

Forward-looking indicators — where to invest next. Historical ROI data is table stakes. CFOs want leading indicators: which AI capabilities are showing early traction? Where are adoption curves steepest? Which teams are seeing productivity gains that haven’t yet translated to financial outcomes but will? The World Economic Forum found that AI ROI payback typically takes 2-4 years — far longer than the 7-12 months expected for typical technology investments. Leading indicators help CFOs maintain investment conviction during that gap.
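The hard ROI, portfolio, and risk-adjusted views described above can be sketched together in a few lines. This is an illustration under stated assumptions, not a prescribed model: the `Initiative` class, its field names, and every figure are hypothetical, and the sketch assumes risk exposure has already been estimated as an annual dollar amount, which is precisely the step most organizations have not yet done.

```python
from dataclasses import dataclass


@dataclass
class Initiative:
    name: str
    annual_cost: float         # tooling, infrastructure, implementation, training
    annual_return: float       # measured financial impact, not projections
    expected_risk_cost: float  # assumption: risk exposure estimated in dollars per year

    def __post_init__(self) -> None:
        if self.annual_cost <= 0:
            raise ValueError("annual_cost must be positive to compute a ratio")

    @property
    def roi(self) -> float:
        """Plain ROI: net return divided by cost."""
        return (self.annual_return - self.annual_cost) / self.annual_cost

    @property
    def risk_adjusted_roi(self) -> float:
        """ROI after subtracting the estimated annual cost of risk."""
        net = self.annual_return - self.expected_risk_cost - self.annual_cost
        return net / self.annual_cost


def rank_portfolio(initiatives):
    """Order initiatives from best to worst risk-adjusted ROI."""
    return sorted(initiatives, key=lambda i: i.risk_adjusted_roi, reverse=True)


# Hypothetical portfolio for illustration only.
portfolio = [
    Initiative("doc-review", 150_000, 120_000, 0),
    Initiative("support-agent", 200_000, 500_000, 50_000),
    Initiative("sales-copilot", 300_000, 360_000, 10_000),
]

for item in rank_portfolio(portfolio):
    print(f"{item.name}: ROI {item.roi:.0%}, risk-adjusted {item.risk_adjusted_roi:.0%}")
```

Ranking on the risk-adjusted figure rather than plain ROI is a design choice: it keeps an initiative with a large return but a large estimated exposure from automatically topping the list.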

Why Technical Metrics Don’t Predict Business Outcomes

There’s a persistent assumption in enterprise AI that better technical performance equals better business results. It rarely does.

An AI model can have 99% accuracy and deliver zero business value — if it’s solving a problem nobody cares about. An AI agent can process 50,000 queries per day with sub-second latency and produce no measurable revenue impact — if those queries don’t connect to business workflows that generate outcomes. MIT’s research found that 95% of generative AI pilots technically succeed but yield no tangible P&L impact. The technical metrics are green. The business impact is zero.

This disconnect exists because technical metrics measure the AI system’s performance, not its contribution. Accuracy, latency, throughput, and error rates tell you whether the model is working correctly. They don’t tell you whether it’s working on the right things, for the right people, in the right workflows, at the right time.

The enterprises that prove AI ROI measure both — but they lead with business outcomes and use technical metrics as diagnostic tools. When revenue impact declines, they look at technical metrics to diagnose why. When accuracy drops, they assess whether it affects a high-value workflow or a low-impact one. The hierarchy matters: business outcomes first, technical metrics in service of understanding those outcomes.

The MEASURE Step: Building Your AI Scorecard

The MEASURE step in the SEE, MEASURE, DECIDE, ACT playbook translates these principles into a practical framework. It starts with three requirements:

Baselines before AI. Without a baseline, you’re reporting output, not impact. What was the metric before AI? If a customer support agent reduces average handle time, what was the average handle time before the agent was deployed? If an AI tool accelerates document review, how long did review take manually? Baselines establish the counterfactual — the “what would have happened without AI” that separates real impact from activity.

Attribution models. AI rarely operates in isolation. When revenue increases after deploying a sales AI tool, how much of that increase is attributable to AI versus seasonal trends, marketing campaigns, or pricing changes? Attribution isn’t perfect, but it’s necessary. Even a directional attribution model (comparing teams with AI to teams without, or measuring pre/post performance in the same team) is better than claiming all improvement for AI.

Time horizons that match the business cycle. A lead generation AI doesn’t show revenue impact in week one. It shows impact when those leads close — which in enterprise B2B might be 90 to 180 days later. A compliance AI doesn’t show risk reduction until the next audit cycle. Measuring AI ROI on a monthly sprint cadence misses outcomes that operate on quarterly or annual timelines. CFOs understand long payback periods. They don’t accept unmeasured ones.
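The baseline and attribution requirements above can be illustrated with a difference-in-differences calculation, one common form of the directional attribution described (teams with AI versus teams without, each measured before and after). The function names and handle-time figures are hypothetical, and the sketch assumes the two teams would have followed similar trends without AI.

```python
def pre_post_change(baseline: float, current: float) -> float:
    """Change in a metric relative to its pre-AI baseline."""
    return current - baseline


def difference_in_differences(
    treated_pre: float,
    treated_post: float,
    control_pre: float,
    control_post: float,
) -> float:
    """Change in the AI-assisted team minus change in the comparison team.

    The comparison team's change stands in for seasonal trends, campaigns,
    or pricing changes that would have affected both teams anyway.
    """
    treated_change = pre_post_change(treated_pre, treated_post)
    control_change = pre_post_change(control_pre, control_post)
    return treated_change - control_change


# Hypothetical average handle times in minutes.
effect = difference_in_differences(
    treated_pre=12.0, treated_post=8.0,    # team using the AI agent
    control_pre=12.5, control_post=11.5,   # team without it
)
print(effect)  # -3.0 minutes attributable to AI, not the full -4.0
```

The naive pre/post comparison would credit AI with the full four-minute improvement; subtracting the comparison team's one-minute improvement leaves three minutes as the directional estimate.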

The result is a balanced AI scorecard: one to two business outcome metrics (the value metrics that appear in board presentations), one to two operational metrics (the efficiency indicators that show how AI is performing), and governance metrics (risk indicators that ensure AI operates within acceptable boundaries). This isn’t about tracking more metrics. It’s about tracking the right ones — and presenting them in the language your CFO speaks.
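As a rough sketch, the scorecard shape described above can be written down as a small data structure with a check that it stays balanced. The `Scorecard` class and the metric names are hypothetical, and the "at least one governance metric" rule is an assumption, since the text does not give a count for that category.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Scorecard:
    business_outcomes: List[str] = field(default_factory=list)
    operational: List[str] = field(default_factory=list)
    governance: List[str] = field(default_factory=list)

    def problems(self) -> List[str]:
        """Return the ways this scorecard departs from the balanced shape."""
        issues = []
        if not 1 <= len(self.business_outcomes) <= 2:
            issues.append("expected one to two business outcome metrics")
        if not 1 <= len(self.operational) <= 2:
            issues.append("expected one to two operational metrics")
        if not self.governance:
            issues.append("expected at least one governance metric")
        return issues


# Hypothetical scorecard for a customer support agent.
card = Scorecard(
    business_outcomes=["churn rate"],
    operational=["average handle time"],
    governance=["compliance incidents"],
)
print(card.problems())  # []
```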

Getting Started

If you’re tracking AI adoption but not AI outcomes, start with three steps. First, identify the three to five business KPIs that your CFO or board reviews quarterly. Second, map each AI initiative to the KPI it should influence — if an AI initiative can’t be mapped to a business KPI, that’s a signal worth examining. Third, instrument measurement: establish baselines, deploy tracking, and commit to a review cadence that matches your business cycle.
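The second step, mapping each initiative to a KPI, lends itself to a quick check. The sketch below is illustrative only: the KPI names, initiative names, and the `unmapped` helper are all hypothetical.

```python
# Step 1: the KPIs the CFO or board reviews quarterly (hypothetical examples).
board_kpis = {"net revenue retention", "cost to serve", "audit findings"}

# Step 2: each AI initiative and the KPI it is expected to influence.
initiative_to_kpi = {
    "support-agent": "net revenue retention",
    "doc-review": "cost to serve",
    "internal-chatbot": None,  # no KPI identified yet
}


def unmapped(mapping, kpis):
    """Initiatives whose target KPI is missing or not on the board's list."""
    return sorted(name for name, kpi in mapping.items() if kpi not in kpis)


print(unmapped(initiative_to_kpi, board_kpis))  # ['internal-chatbot']
```

Anything the check returns is a candidate for the "signal worth examining" conversation before measurement is instrumented.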

The small share of enterprises that can prove AI revenue impact aren’t using more sophisticated models. They’re using more sophisticated measurement. They defined what success looks like in financial terms before deploying AI, and they built the instrumentation to prove it. That discipline, not better technology, is what separates the organizations scaling AI from the organizations stuck explaining adoption dashboards to skeptical boards.

Olakai’s custom KPI tracking lets you define the business metrics that matter and connect them to AI activity in real time. And Future of Agentic’s KPI library provides ready-made metric templates by use case, so you don’t have to start from scratch.

Ready to move beyond adoption dashboards? Talk to an expert and we’ll show you how enterprises connect AI usage to the business metrics their CFOs actually want to see.