For VPs of Engineering

Prove every AI coding tool’s ROI. Standardize with confidence.

Your engineers are using Claude Code, Cursor, GitHub Copilot, Windsurf, OpenAI, Codeium, and Sourcegraph Cody, sometimes all of them at once. Procurement asks, “Is this paying off?” You don’t have the data. Olakai reads your GitHub data directly and gives you the answer in cycle-time deltas, not vendor anecdotes.

Every coding tool sells you adoption. None of them sell you outcomes.

Cursor shows you Cursor adoption. GitHub shows you Copilot acceptance rates. Anthropic shows you Claude Code spend. None of them connect that activity to the only number procurement actually cares about — whether your engineers are shipping faster. Olakai connects them. End to end. From every PR.

What you get with Olakai

Cycle time impact, by tool

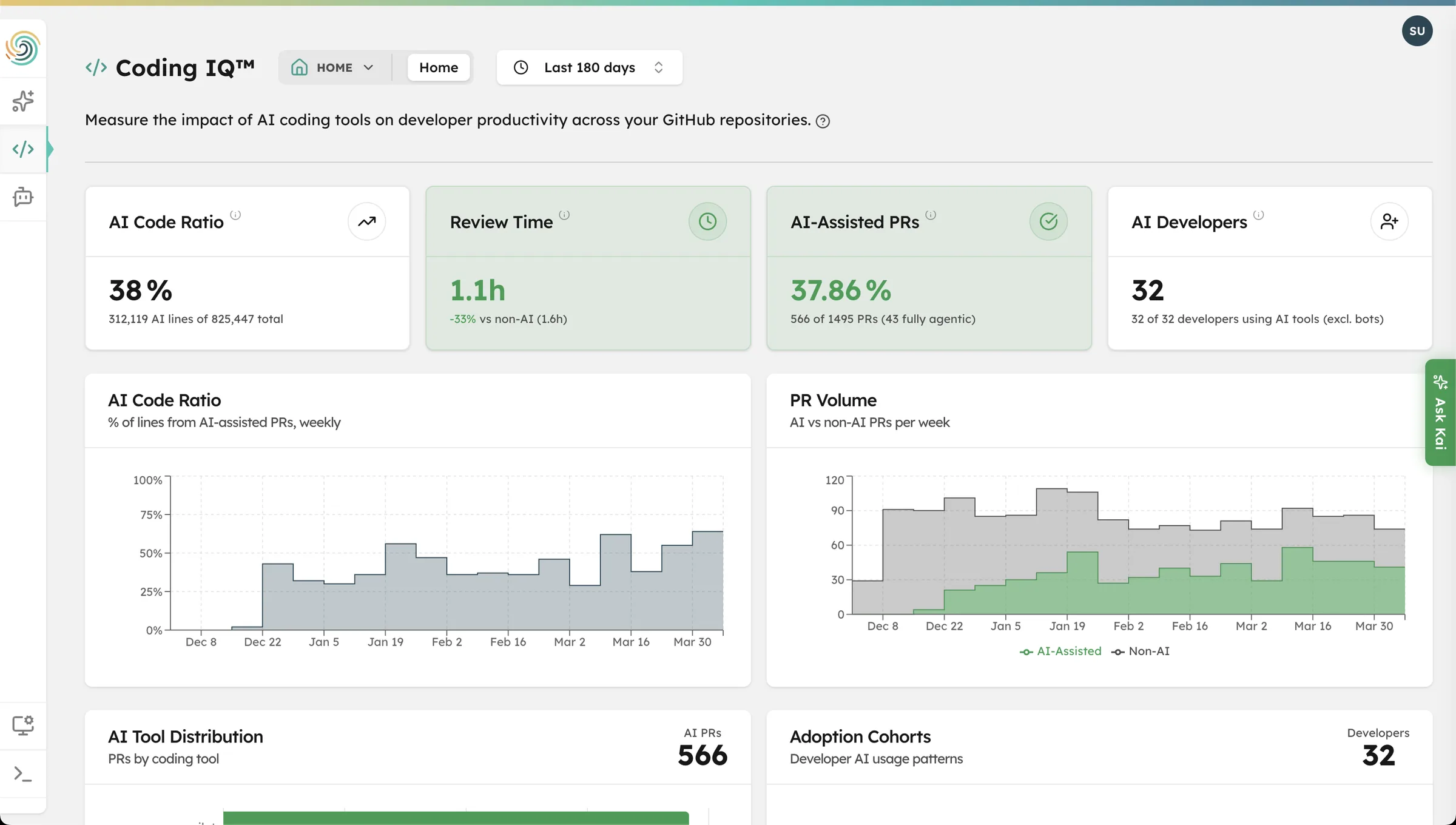

Cycle time delta for AI-assisted vs non-AI PRs, broken out by every provider you run. Coding time, review time, total cycle. The exact metric procurement asks you for — backed by your real GitHub data. See Coding IQ.

Adoption coaching

Every developer in your org segmented into Power, Casual, New, or Idle cohorts — with the data you need to coach the casual users, reclaim the idle licenses, and standardize on what your power users have already chosen.

Cost per PR, by provider

Daily cost imports from Anthropic, Cursor, GitHub, Windsurf, OpenAI, Codeium, and Sourcegraph. Divided by PRs produced. Compared across teams. The single number that tells you which tool to standardize on next quarter.
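For readers who want to see the arithmetic, here is a minimal sketch of a cost-per-PR calculation. It is not Olakai's implementation: the function name `cost_per_pr`, the input shapes, and the dollar figures are all hypothetical, and it assumes each PR has already been attributed to a single provider.

```python
from collections import defaultdict

def cost_per_pr(daily_costs, pr_counts):
    """Sum daily spend per provider and divide by PRs produced.

    daily_costs: iterable of (provider, usd) rows, one per daily import (assumed shape).
    pr_counts:   dict mapping provider -> number of PRs attributed to it (assumed shape).
    Returns a dict mapping provider -> cost per PR, or None when no PRs were produced.
    """
    totals = defaultdict(float)
    for provider, usd in daily_costs:
        totals[provider] += usd

    result = {}
    for provider, total in totals.items():
        prs = pr_counts.get(provider, 0)
        # Avoid dividing by zero for a provider with spend but no PRs.
        result[provider] = total / prs if prs else None
    return result

# Illustrative numbers only, not real pricing or usage data.
daily_costs = [("Cursor", 120.0), ("Cursor", 80.0), ("Anthropic", 300.0)]
pr_counts = {"Cursor": 40, "Anthropic": 50}
print(cost_per_pr(daily_costs, pr_counts))
```

A provider with spend but zero PRs comes back as `None` rather than raising an error, which keeps unused licenses visible in the comparison.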

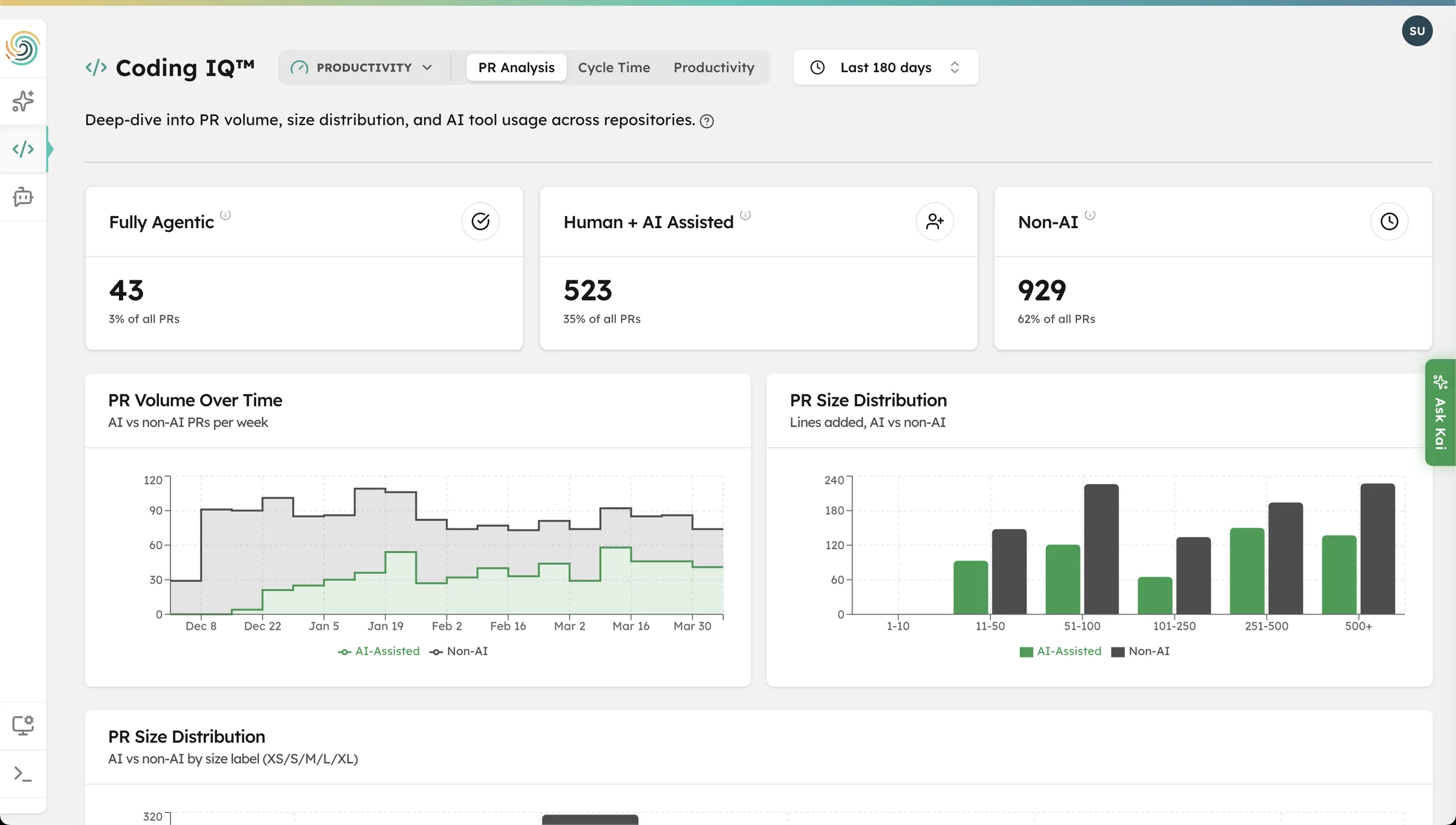

PR Analysis

See exactly how much faster AI-assisted PRs ship.

Coding IQ reads PR data directly from your GitHub org and plots cycle time for AI-assisted vs non-AI PRs side by side — across every repo, every team, every provider. AI-assisted PRs are typically 25–40% faster. Coding IQ tells you whether yours are.
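To make the comparison concrete, here is a rough sketch of a cycle-time delta. It rests on several assumptions that do not come from Coding IQ itself: cycle time is taken as the hours from PR open to merge, the median is used as the summary statistic, each PR already carries a hypothetical `ai_assisted` flag, and the sample timestamps are invented for illustration.

```python
from datetime import datetime
from statistics import median

def cycle_hours(pr):
    """Hours from PR opened to PR merged (an assumed definition of cycle time)."""
    opened = datetime.fromisoformat(pr["opened_at"])
    merged = datetime.fromisoformat(pr["merged_at"])
    return (merged - opened).total_seconds() / 3600

def cycle_time_delta(prs):
    """Percent difference in median cycle time, AI-assisted vs non-AI PRs.

    Negative means AI-assisted PRs merged faster. Returns None if either group is empty.
    """
    ai = [cycle_hours(p) for p in prs if p["ai_assisted"]]
    non_ai = [cycle_hours(p) for p in prs if not p["ai_assisted"]]
    if not ai or not non_ai:
        return None
    baseline = median(non_ai)
    return (median(ai) - baseline) / baseline * 100

# Invented sample data; the "ai_assisted" flag is a hypothetical field.
prs = [
    {"opened_at": "2024-01-01T00:00:00", "merged_at": "2024-01-02T04:00:00", "ai_assisted": True},
    {"opened_at": "2024-01-03T00:00:00", "merged_at": "2024-01-04T08:00:00", "ai_assisted": True},
    {"opened_at": "2024-01-01T00:00:00", "merged_at": "2024-01-02T12:00:00", "ai_assisted": False},
    {"opened_at": "2024-01-03T00:00:00", "merged_at": "2024-01-04T20:00:00", "ai_assisted": False},
]
print(cycle_time_delta(prs))
```

In this made-up sample the AI-assisted median is 30 hours against a 40-hour baseline, so the delta is -25%. The same calculation can be run per repo, team, or provider by filtering the PR list first.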

See your team’s Coding IQ data in 2 minutes.

We’ll send you a Magic Link to a live Coding IQ environment with realistic engineering data — cycle time delta, adoption cohorts, cost per PR across providers, and Kai ready to answer your tool-comparison questions. No login. No setup.