Here’s a scenario we’ve watched play out more than once. A CIO asks his team a simple question: how many AI tools are we paying for right now? He gets a clean answer, a few dozen on the procurement list, with a combined spend that’s easy to defend in a board meeting. Then his company deploys a browser extension across the organization. Within 48 hours, the real answer arrives. Not dozens. Hundreds. Most of them free, most of them nowhere near the procurement list, and almost none of them visible to the security team.

That gap between what leadership knows about and what employees actually use is the shadow AI problem. Most conversations about it focus on the threat, and the threat is real. But there’s another side of shadow AI that almost nobody talks about, and it’s the reason the CIO in that story spent the next month rethinking his entire AI procurement strategy. Shadow AI is the most honest data you have about what your teams actually want from AI. Used right, it’s a procurement gift in a security wrapper.

The number that should worry you (and also excite you)

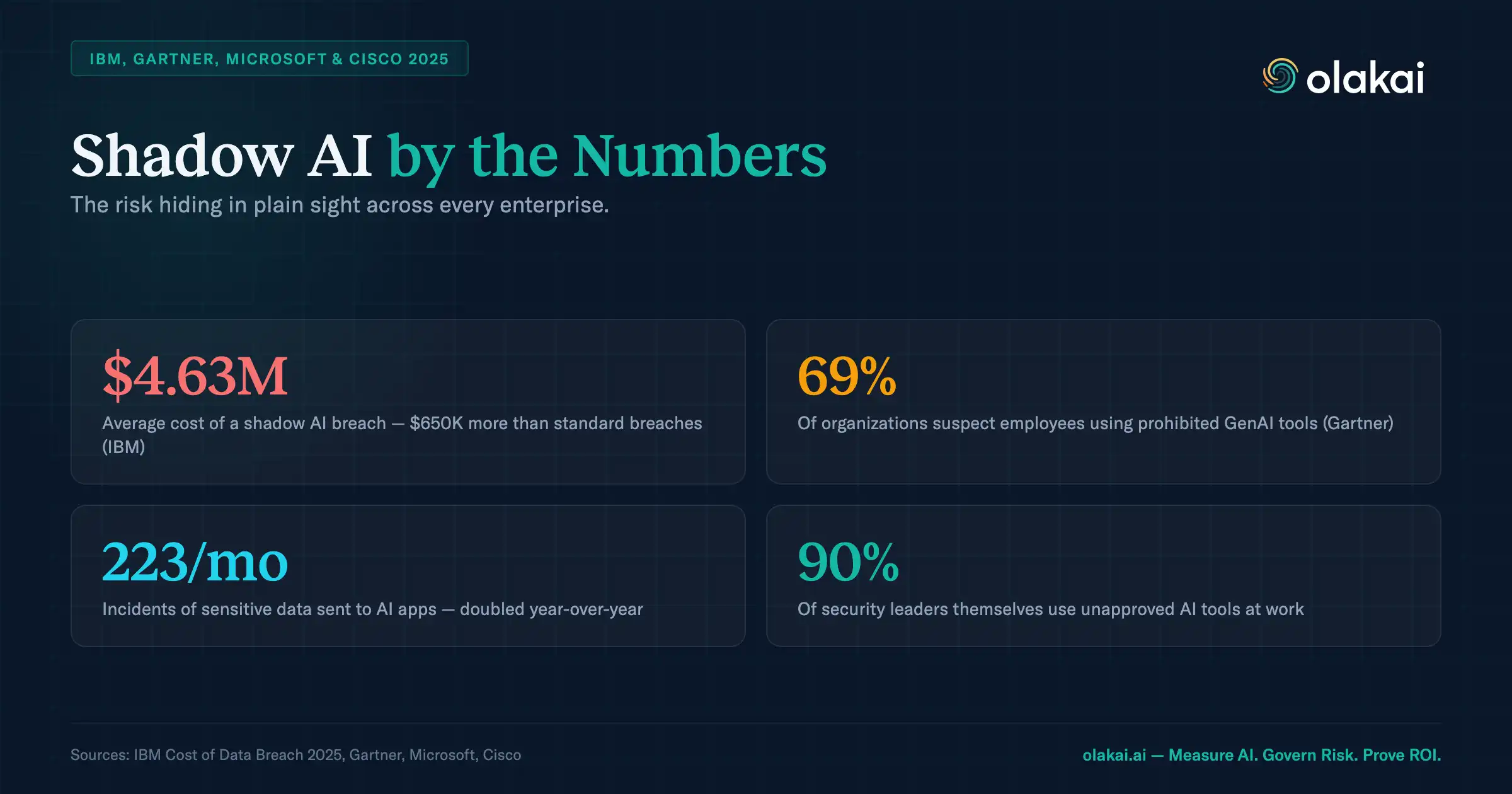

Across the enterprise deployments we’ve analyzed, roughly 60% of AI usage happens outside IT-sanctioned channels. Employees paste customer data into free ChatGPT tabs, upload financial models to AI summarizers they found on Product Hunt, and run code through coding assistants the chief AI officer has never heard of. The security risk is obvious. What gets less airtime is what that 60% is telling you about your workforce. Six out of every ten AI interactions happening in your company right now are a demand signal: each one is an employee who needed a tool badly enough to go find one, bypass procurement, and keep using it until it became habit. That isn’t only a risk. It’s research.

Prohibition has never worked

Every security team’s first instinct, when they see the shadow AI scale for the first time, is to ban it. Block the URLs. Rewrite the acceptable use policy. Send a firmwide email. We’ve watched this happen a dozen times, and the result is always the same. Employees route around the block. They switch to mobile devices. They email documents to personal accounts and run them through free tools at home. They find three alternatives for every one you block, because the tools genuinely help them work faster, and no policy has ever beaten a productivity gain you can feel within an hour.

The answer is not prohibition. It’s visibility and governance. You need to know what is being used, by whom, with what data, and whether it complies with your policies. Then you need to decide, tool by tool, whether to sanction it, route it through an approved version, or block it with a replacement in hand. That’s governance. Prohibition is policy theater.
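To make the tool-by-tool decision concrete, here is a minimal sketch of that triage in Python. The `ToolReview` fields and the order of the rules are our own illustrative assumptions, not a description of any product’s policy engine; your own review criteria will be richer.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ToolReview:
    """Hypothetical review record for one discovered AI tool."""
    name: str
    compliant: bool                          # passes your policies as-is
    approved_version: Optional[str] = None   # e.g. an enterprise tier you already license
    replacement: Optional[str] = None        # a sanctioned alternative to offer


def triage(tool: ToolReview) -> str:
    """Return one of the three governance outcomes described above."""
    if tool.compliant:
        return "sanction"
    if tool.approved_version is not None:
        return f"route to {tool.approved_version}"
    if tool.replacement is not None:
        return f"block, offer {tool.replacement}"
    # Assumption: never block without a replacement in hand.
    return "hold until a replacement is found"
```

The last branch encodes the point of this section: a block with nothing to offer in its place is the prohibition that employees route around.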

What shadow AI is actually telling you

Here’s the part of the conversation nobody has. Your sanctioned AI stack tells you what your leadership team decided. Shadow AI tells you what your people reached for when nothing was stopping them. That second data set is almost always more useful than the first.

When you can see every AI tool in use across the organization, ranked by department, frequency, and data sensitivity, you start noticing patterns that rewrite your procurement plan. The $40,000-a-year tool legal bought in Q2 has less weekly usage than a free alternative two analysts discovered on their own. Marketing adopted a writing assistant that nobody approved, and campaign velocity has quietly doubled. Finance is using three different AI tools that overlap almost completely, a consolidation opportunity that pays for itself in a month. Shadow AI isn’t a failure of policy. It’s a heat map of real demand.
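If you want a feel for what that heat map looks like as data, the sketch below ranks usage by department and tool and lists which tools touched sensitive data. The event format, tool names, and sensitivity labels are all hypothetical, chosen only to illustrate the idea.

```python
from collections import Counter, defaultdict

# Hypothetical usage events: (department, tool, data_sensitivity).
events = [
    ("legal", "FreeSummarizer", "high"),
    ("legal", "FreeSummarizer", "high"),
    ("legal", "PaidLegalAI", "high"),
    ("marketing", "WriterBot", "low"),
    ("marketing", "WriterBot", "low"),
    ("marketing", "WriterBot", "medium"),
    ("finance", "SheetGPT", "high"),
]


def demand_heat_map(events):
    """Count interactions per (department, tool), most used first."""
    counts = Counter((dept, tool) for dept, tool, _ in events)
    return counts.most_common()


def high_sensitivity_tools(events):
    """Group the tools that touched high-sensitivity data by department."""
    exposed = defaultdict(set)
    for dept, tool, sensitivity in events:
        if sensitivity == "high":
            exposed[dept].add(tool)
    return dict(exposed)


for (dept, tool), count in demand_heat_map(events):
    print(f"{dept:10} {tool:15} {count}")
```

In this made-up sample the free summarizer outranks the paid legal tool, which is exactly the kind of pattern that should prompt a second look at the renewal.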

This is the reframe that changes the budget conversation. McKinsey’s State of AI has tracked enterprise AI adoption climbing faster than any other software category in a decade, but almost no organization we talk to can tell you where the value inside that adoption is actually landing. Shadow AI is where a lot of that value is landing, and it’s where most of the buying signals you’re missing already live.

What Olakai does about it

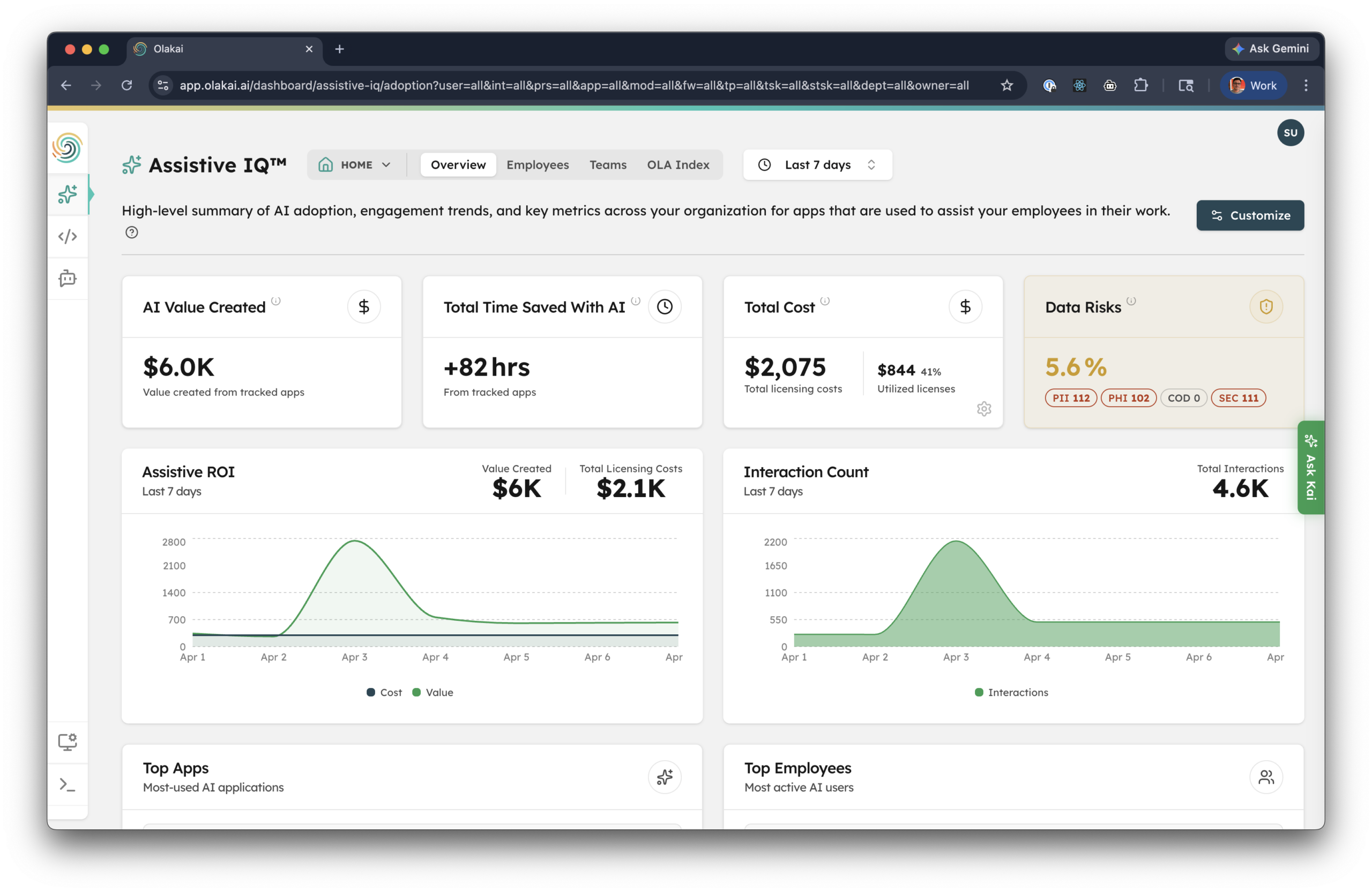

We built Olakai’s shadow AI capability around three steps: discover, govern, and report. Discovery starts with a lightweight browser extension that recognizes more than 600 AI tools and flags each one the moment an employee starts using it. It doesn’t require SSO integration, it doesn’t require expense report reconciliation, and it catches the tools that would never show up in either. Within days, you have a complete inventory of every AI tool your organization is actually using, mapped to departments, users, and usage patterns.

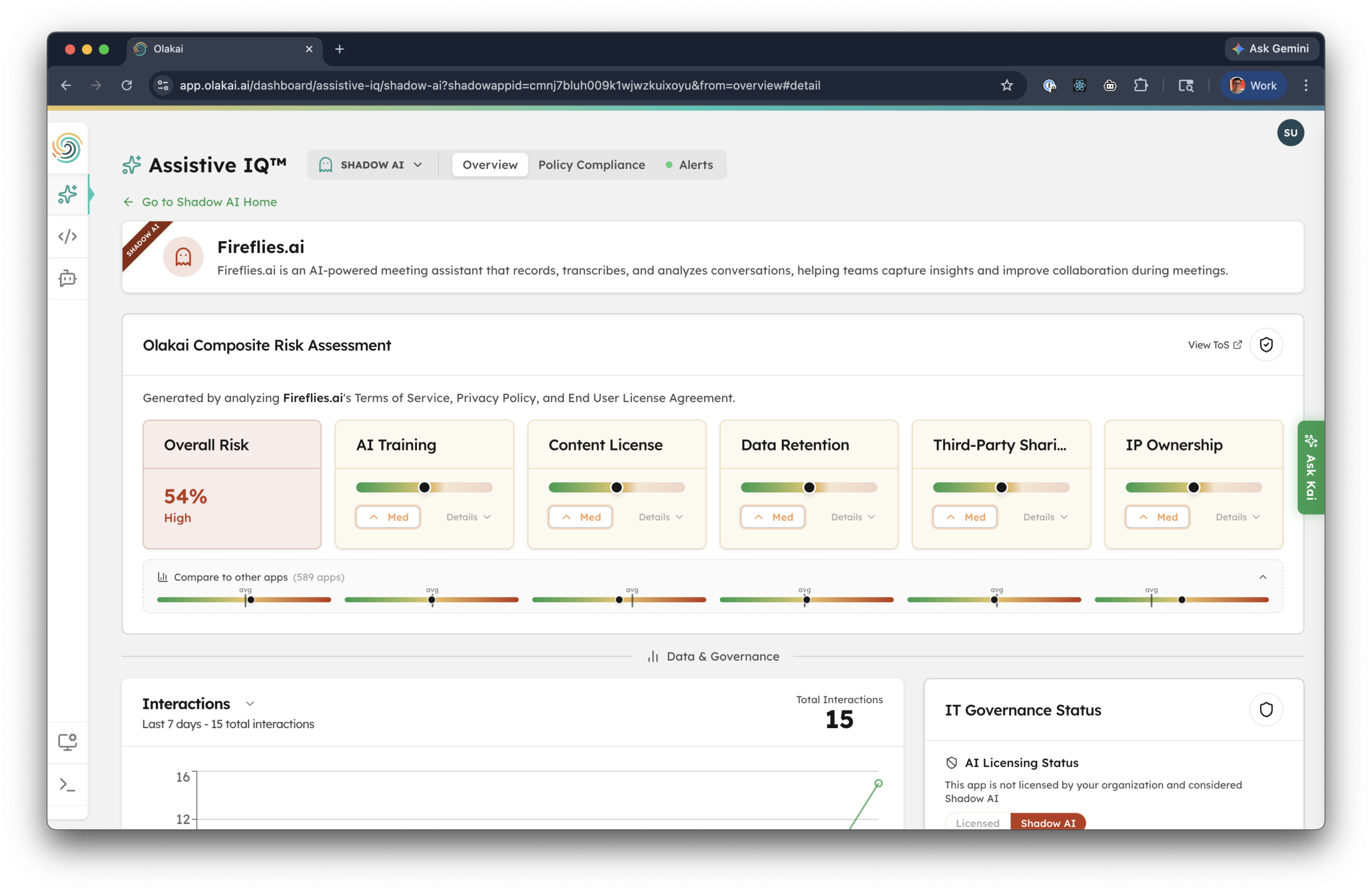

Governance is where the threat and the opportunity both get resolved. Olakai ranks each tool by risk surface: which ones are processing PII, which departments are most exposed, which specific prompts contain sensitive data. You set policies once, and they are enforced automatically. Real-time data loss prevention catches regulated data before it leaves the building. Approved tools keep running. High-risk ones get blocked with a replacement suggested in the same breath. Your security team stops playing whack-a-mole and starts making decisions backed by real usage data.
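For readers who want to picture what a prompt-level check involves, here is a deliberately tiny sketch. It is not how Olakai’s data loss prevention works; the two regular expressions and the `check_prompt` helper are illustrative assumptions, and real detection of regulated data needs far broader coverage than pattern matching on emails and US Social Security numbers.

```python
import re

# Illustrative patterns only: an email address and a US SSN-shaped number.
PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def find_sensitive(prompt: str) -> list[str]:
    """Return the names of the patterns found in the prompt, sorted."""
    return sorted(name for name, pattern in PATTERNS.items() if pattern.search(prompt))


def check_prompt(prompt: str) -> str:
    """Allow the prompt, or block it and say which patterns matched."""
    hits = find_sensitive(prompt)
    return "block: " + ", ".join(hits) if hits else "allow"


print(check_prompt("Summarize the Q3 plan"))
print(check_prompt("Customer jane@example.com, SSN 123-45-6789"))
```

The useful part is the shape of the decision: the check runs before the prompt leaves the browser, and the result names what was found so the employee knows why.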

Reporting closes the loop. Every interaction, every tool, every policy decision becomes an audit trail you can export for compliance reviews. When an auditor asks what AI tools your finance team used in Q3, you don’t send an IT admin to pull logs from five different admin consoles. You export one report.

The part your CFO will actually care about

This is the piece of the shadow AI conversation that usually gets left out, and it’s the piece that pays for the platform in the first quarter. Every AI tool you’re sanctioning charges by the seat. Every enterprise over-provisions. Assistive IQ shows you exactly which licenses are sitting idle, by tool, by team, by user, and gives you a precise reclaim list you can hand to procurement before the next renewal cycle.

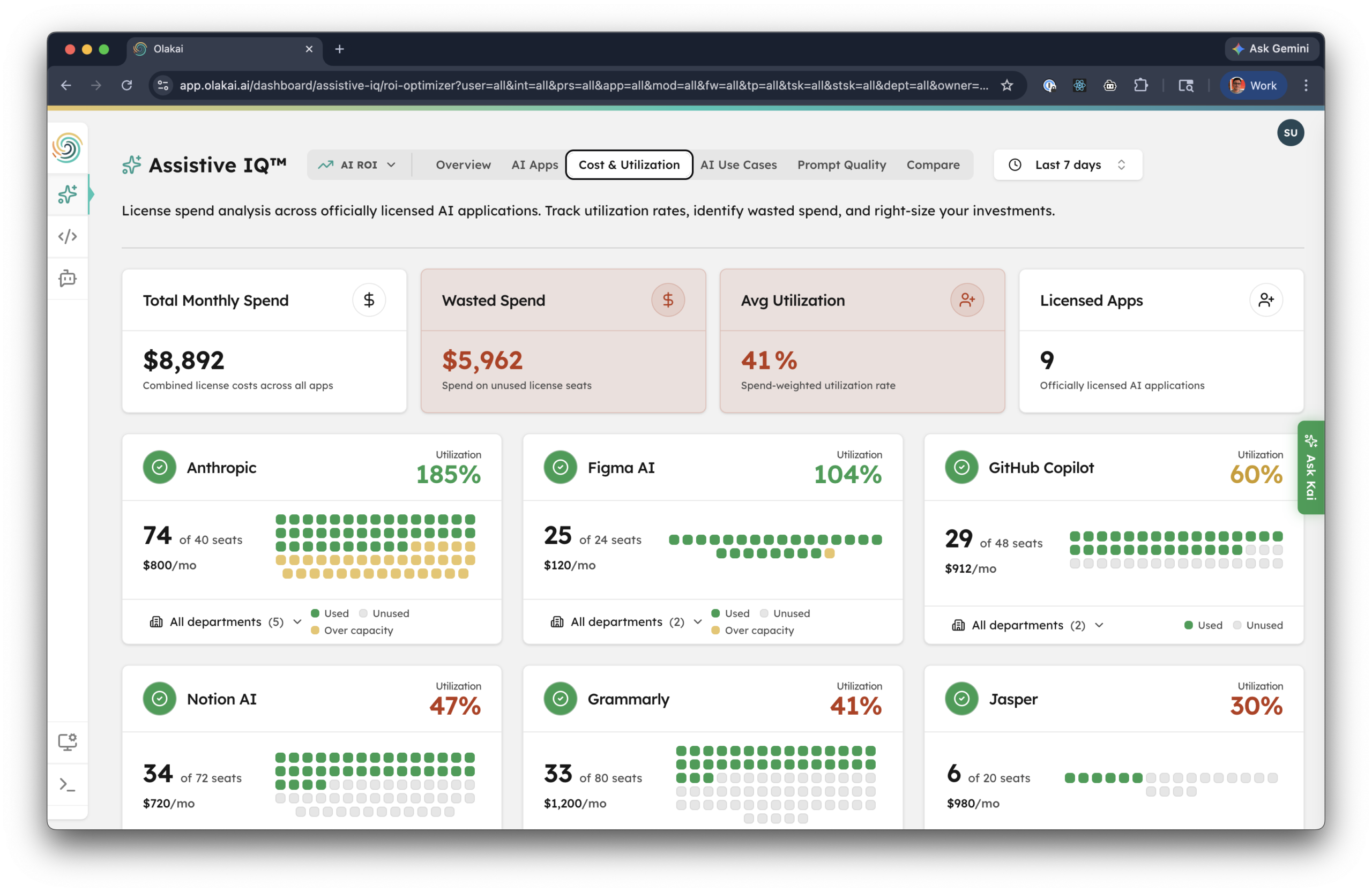

Look at the screenshot above for a minute. It’s a real view from an Assistive IQ deployment. Total monthly licensed AI spend: $8,892. Wasted spend on unused seats: $5,962. That’s 67% of the AI budget earning nothing, and it’s not an outlier. Some tools are running over capacity (Anthropic at 185% utilization, Figma AI at 104%, both strong signals to buy more seats or renegotiate). Others are drastically under (Jasper at 30%, Grammarly at 41%, both candidates for reclaim or consolidation). The same single view tells procurement where to buy more, where to cut, and where to do neither.
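The arithmetic behind those figures is simple enough to check yourself. The sketch below uses the numbers quoted above; the 50% and 100% cutoffs in `recommend` are our own assumptions for illustration, not thresholds the product applies.

```python
# Figures quoted from the screenshot discussed above.
total_monthly_spend = 8892   # USD, licensed AI spend
wasted_spend = 5962          # USD, spend on unused seats

waste_share = wasted_spend / total_monthly_spend
print(f"Wasted share of AI budget: {waste_share:.0%}")

# Seat utilization per tool, in percent, as quoted above.
utilization = {"Anthropic": 185, "Figma AI": 104, "Jasper": 30, "Grammarly": 41}


def recommend(util_pct: int, low: int = 50, high: int = 100) -> str:
    """Map utilization to a procurement action (cutoffs are assumptions)."""
    if util_pct > high:
        return "buy more seats or renegotiate"
    if util_pct < low:
        return "reclaim or consolidate"
    return "leave as is"


for tool, pct in utilization.items():
    print(f"{tool:10} {pct:>4}%  {recommend(pct)}")
```

Any tool between the two cutoffs falls into the third bucket, where the right move is to do neither.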

Now overlay that with the shadow AI data. The tools your people are reaching for on their own, that you aren’t paying for, are frequently the ones quietly delivering the most value. Meanwhile the licensed tools sitting at 30% utilization are the ones you’re defending in a budget review. That isn’t a failure of the employees. It’s a failure of visibility. When you can see both sides at once, the licensed spend that isn’t earning its keep and the shadow usage that represents real demand, procurement stops guessing. You reallocate budget from the dead seats to the tools your team is actually using, and you do it with numbers your CFO will accept.

Why this exercise matters right now

Every enterprise we talk to is sitting at some version of the same moment. AI spending has ramped faster than any other software category in a decade. Boards are asking for ROI numbers, and their patience is running out. Regulators are writing compliance frameworks that will require audit-ready AI usage logs. Meanwhile, the people actually using AI in your company are miles ahead of the procurement process that’s supposed to govern them. The gap between those two things is growing by the week, and it’s becoming the single biggest source of hidden risk and hidden opportunity in enterprise IT.

Companies that close the gap this year will have a cleaner compliance story, a leaner AI budget, and a better read on which AI tools are actually working than their competitors. Companies that don’t will spend 2026 explaining what they don’t know about AI to boards that assumed they already knew it. The exercise of actually surfacing shadow AI, governing it, and feeding the data back into procurement is one of the highest-leverage things a CIO, CISO, or CFO can do in the next 90 days.

Your next step

Shadow AI isn’t going away. It’s the shape enterprise AI adoption takes before governance catches up. You can treat it as a threat and spend the next year trying to suppress it, or you can treat it as the most honest data you’ve ever had about how your people actually work, make procurement and governance decisions from that data, and turn the whole thing into an advantage.

If you want to see what shadow AI looks like inside your own organization, talk to an expert. We’ll show you what 48 hours of browser-level visibility reveals, and we’ll walk you through how the threat and the opportunity get resolved at the same time.