
Prove the ROI of every AI coding tool — across every team, every provider.

Engineering teams are spending real money on Claude Code, Cursor, Copilot, Windsurf, and OpenAI — but most can’t tell the board which tools are actually moving cycle time, which licenses are sitting idle, or whether the spend is paying off. Coding IQ measures it, end to end.

Every AI coding vendor sells you a dashboard. None of them tell you the truth.

Cursor shows you Cursor adoption. GitHub shows you Copilot acceptance rates. Anthropic shows you Claude Code spend. None of them connect that activity to the only number your CFO actually cares about — whether your engineers are shipping faster because of it.

Coding IQ sits above every provider, reads PR data directly from GitHub, and gives engineering leaders one answer to one question: is this working?

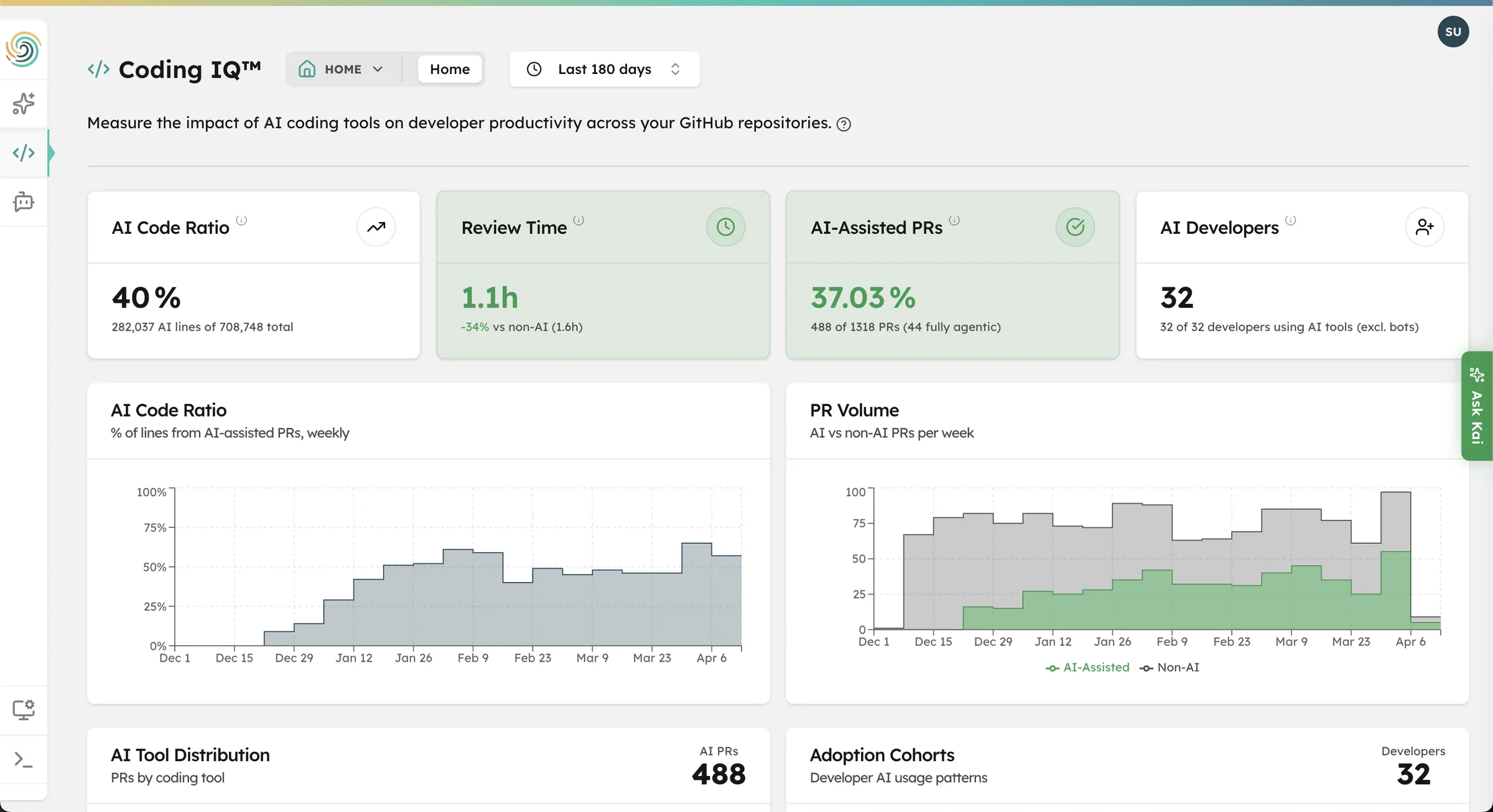

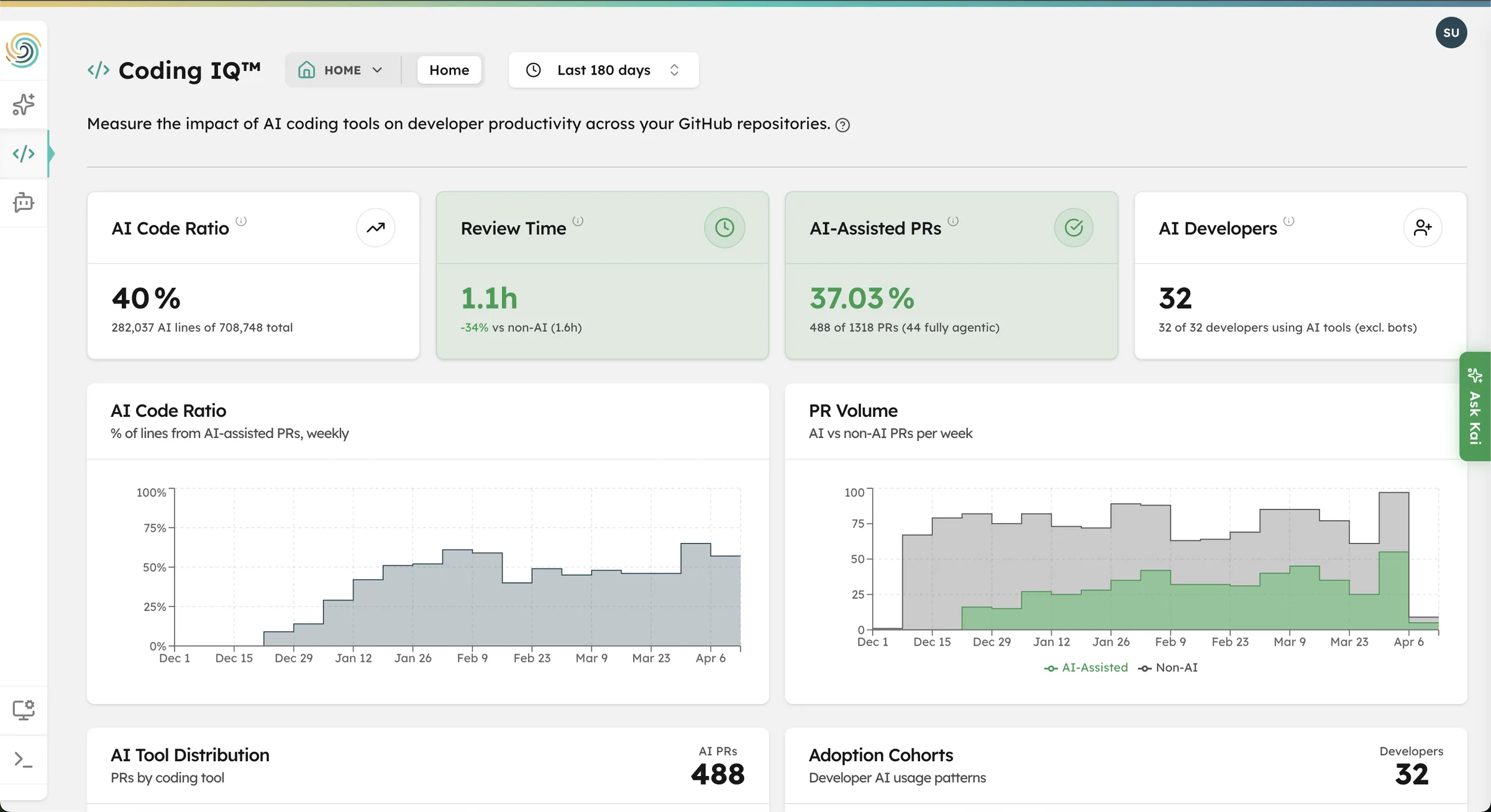

What Coding IQ measures

Cycle time, with and without AI

Compare coding time, review time, and total cycle time for AI-assisted PRs vs. non-AI PRs across every repo and team. AI-assisted PRs are typically 25–40% faster — Coding IQ tells you whether yours are.

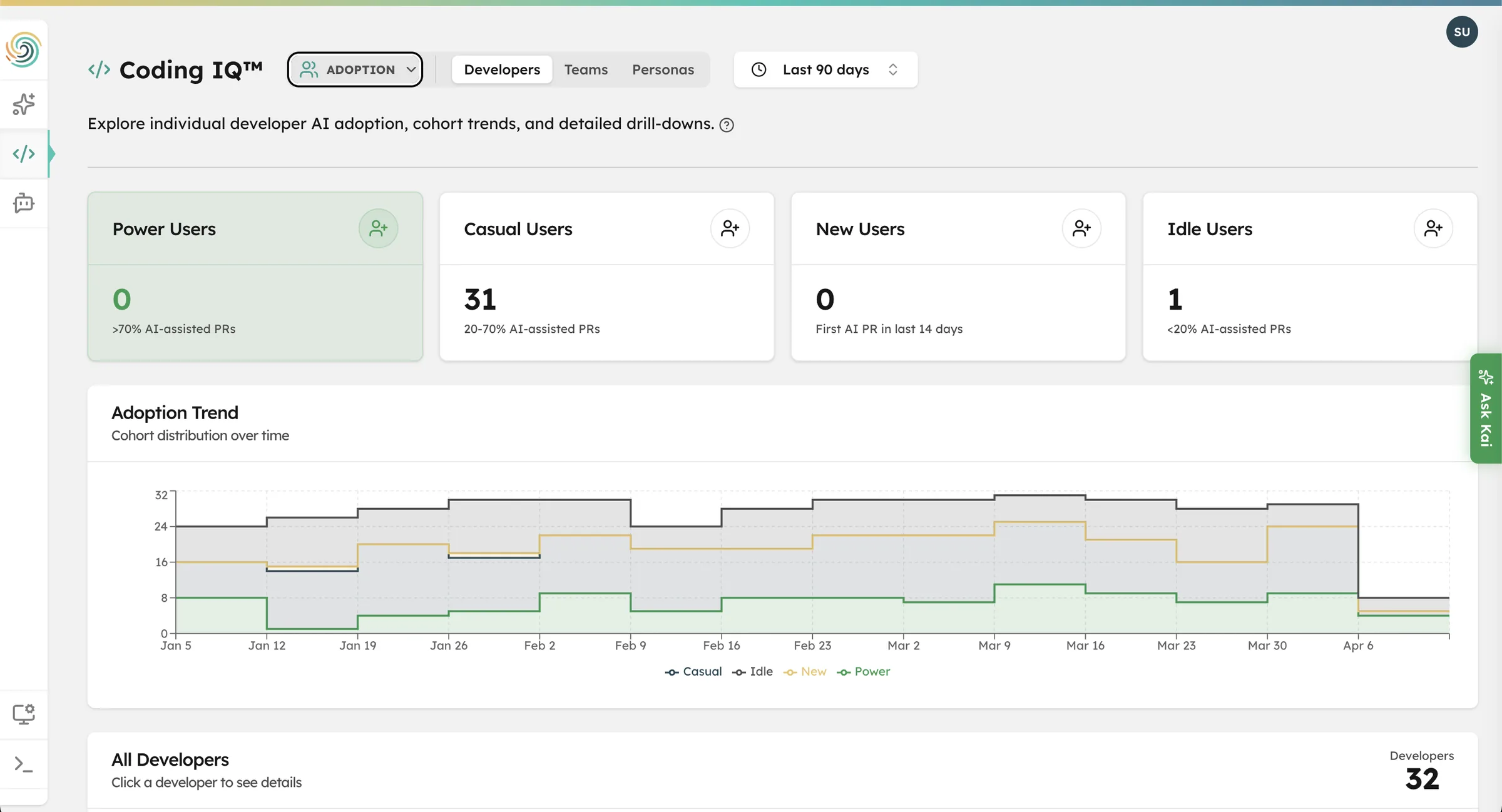
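One way to picture the comparison is as a percentage difference between median cycle times. This is a minimal sketch, not Coding IQ's actual calculation: the function name `percent_faster`, the use of the median, and the unit of hours are all assumptions made for illustration.

```python
from statistics import median

def percent_faster(ai_hours, non_ai_hours):
    """Return how much faster (in percent) the median AI-assisted PR is
    than the median non-AI PR. Hypothetical helper, for illustration only.

    Both arguments are non-empty lists of cycle times in hours.
    A negative result means AI-assisted PRs were slower.
    """
    ai_median = median(ai_hours)
    baseline_median = median(non_ai_hours)
    if baseline_median == 0:
        raise ValueError("baseline median cycle time must be non-zero")
    return (baseline_median - ai_median) * 100.0 / baseline_median

# Example: median of 7 hours for AI-assisted PRs vs. 10 hours for the rest.
delta = percent_faster([6, 7, 8], [9, 10, 11])
```

The same function could be applied separately to coding time and review time, assuming each is tracked per PR.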

Adoption that’s actually real

Every developer is automatically segmented into a cohort: Power (>70% AI-assisted PRs), Casual (20–70%), New (first AI PR in the last 14 days), or Idle (<20%). See where licenses are paying off and where they’re sitting on the shelf.

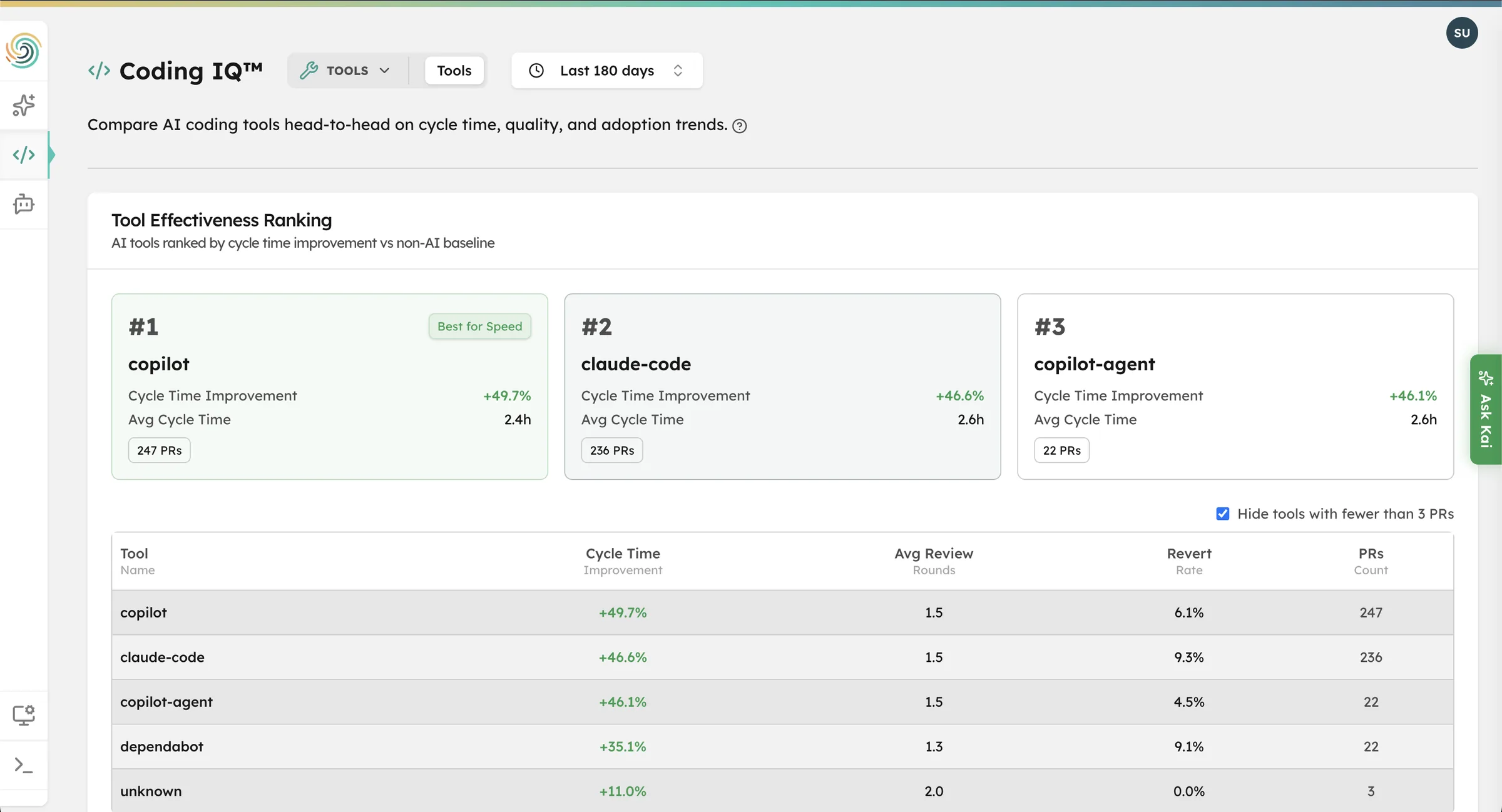
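The cohort thresholds above can be sketched as a small classification function. The function name `classify_cohort` and its parameters are hypothetical. The sketch also makes two assumptions the page does not state: that "New" takes precedence over the ratio-based cohorts, and that the PR counts passed in already cover whatever reporting window applies.

```python
from datetime import date, timedelta
from typing import Optional

def classify_cohort(ai_prs: int, total_prs: int,
                    first_ai_pr: Optional[date], today: date) -> str:
    """Assign a developer to Power, Casual, New, or Idle.

    Hypothetical helper; thresholds come from the cohort definitions above.
    """
    # Assumption: a first AI PR within the last 14 days wins over the ratio.
    if first_ai_pr is not None and today - first_ai_pr <= timedelta(days=14):
        return "New"
    # Developers with no PRs at all are treated as 0% AI-assisted.
    ratio = ai_prs / total_prs if total_prs else 0.0
    if ratio > 0.70:
        return "Power"
    if ratio >= 0.20:
        return "Casual"
    return "Idle"

# Example: 8 of 10 PRs were AI-assisted, first AI PR months ago.
cohort = classify_cohort(8, 10, date(2024, 1, 1), date(2024, 6, 1))
```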

Cost per PR, by provider

Daily import of cost and usage data from Anthropic, Cursor, GitHub Copilot, Windsurf, and OpenAI. Divide by PRs produced. Compare across tools and teams. Stop guessing which provider deserves the budget next quarter.
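The arithmetic is simple enough to sketch: sum each provider's daily cost records, then divide by the number of PRs attributed to that provider. The function name `cost_per_pr` and the record shapes are hypothetical. How a PR gets attributed to a provider is not described here, so the sketch takes those counts as given.

```python
from collections import defaultdict

def cost_per_pr(cost_rows, pr_counts):
    """Return {provider: cost per PR in USD, or None when there are no PRs}.

    cost_rows: iterable of (provider, usd) daily cost records (assumed shape).
    pr_counts: dict mapping provider -> number of PRs attributed to it.
    """
    totals = defaultdict(float)
    for provider, usd in cost_rows:
        totals[provider] += usd
    return {
        provider: (totals.get(provider, 0.0) / count if count else None)
        for provider, count in pr_counts.items()
    }

# Example with made-up numbers: two days of Cursor spend, one of Copilot.
rows = [("cursor", 100.0), ("cursor", 50.0), ("copilot", 90.0)]
result = cost_per_pr(rows, {"cursor": 30, "copilot": 0})
```

Returning `None` for a provider with spend but no PRs keeps idle spend visible instead of hiding it behind a division error.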

PR Analysis

See exactly how much faster AI-assisted PRs ship

Coding IQ ingests PR data directly from your GitHub organization, with no agent or SDK to install. Every PR is automatically classified as AI-assisted or non-AI by reading commit co-author trailers, bot authorship, and PR body markers. Each PR is then plotted against your historical cycle time so the delta is unambiguous.

- Coding time, review time, and total cycle time — AI vs non-AI

- PR volume and size distribution over time

- AI code ratio: % of merged lines that came from AI-assisted PRs
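The three classification signals described above (co-author trailers, bot authorship, PR body markers) might be checked roughly like this. It is a sketch under stated assumptions, not Coding IQ's actual rules: the function name `is_ai_assisted`, the name hints, and the body markers are all hypothetical. The only facts relied on are Git's `Co-authored-by:` trailer format and GitHub's `[bot]` login suffix for app accounts.

```python
import re

# Matches Git trailers such as "Co-authored-by: Name <email>".
CO_AUTHOR_RE = re.compile(
    r"^Co-authored-by:\s*(.+?)\s*<[^>]*>\s*$", re.IGNORECASE | re.MULTILINE
)
# Hypothetical hints and markers; a real rule set would be curated per tool.
AI_NAME_HINTS = ("claude", "copilot", "cursor", "windsurf")
BODY_MARKERS = ("generated with claude code", "ai-assisted")

def is_ai_assisted(commit_messages, author_login, pr_body):
    """Return True if any of the three signals marks the PR as AI-assisted."""
    # Signal 1: a co-author trailer naming an AI tool.
    for message in commit_messages:
        for name in CO_AUTHOR_RE.findall(message):
            if any(hint in name.lower() for hint in AI_NAME_HINTS):
                return True
    # Signal 2: the PR author is a bot account for an AI tool.
    # The name check keeps unrelated bots (dependency updaters) out.
    login = author_login.lower()
    if login.endswith("[bot]") and any(h in login for h in AI_NAME_HINTS):
        return True
    # Signal 3: a marker string in the PR description.
    body = (pr_body or "").lower()
    return any(marker in body for marker in BODY_MARKERS)
```

Once each merged PR carries this flag, the AI code ratio from the list above is the merged lines from flagged PRs divided by all merged lines.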

Developers

Find the idle licenses. Coach the casual users. Reward the power users.

Buying 200 Cursor seats doesn’t mean 200 developers are getting value from them. Coding IQ segments every developer in your org into one of four adoption cohorts and shows you which tools they actually use, how often, and what their cycle time looks like compared to peers.

The result: a precise list of who to enable, who to train, and where to reclaim spend.

Provider Cost Comparison

Vendor-neutral, side by side, every provider

Connect Anthropic, Cursor, GitHub Copilot, Windsurf, and OpenAI in Settings. Coding IQ pulls cost and usage daily, normalizes it, and shows you per-user spend, token breakdowns, acceptance rates, and trends — for every provider, in one view. No more spreadsheets. No more vendor-by-vendor procurement reviews.

Coding IQ doesn’t live in a silo.

It runs on the same platform as Agent IQ and Assistive IQ, so the engineering velocity story rolls up into the same enterprise AI ROI dashboard your CFO and CAIO are already looking at. And because everything flows through Kai, you can ask your AI program a question in plain English and get a reasoned answer in seconds:

“Which engineering team has the highest cycle-time delta from AI coding tools, and what’s it costing us per PR?”

Explore Coding IQ on your own terms.

Drop your work email below and we’ll send you a private link to a live Coding IQ environment — pre-loaded with realistic engineering data so you can click through AI adoption by team, cycle time deltas, and cost per PR across every provider at your own pace.

No account to create. No demo call to book. No commitment. If you want a guided walkthrough afterward, we’re one click away.