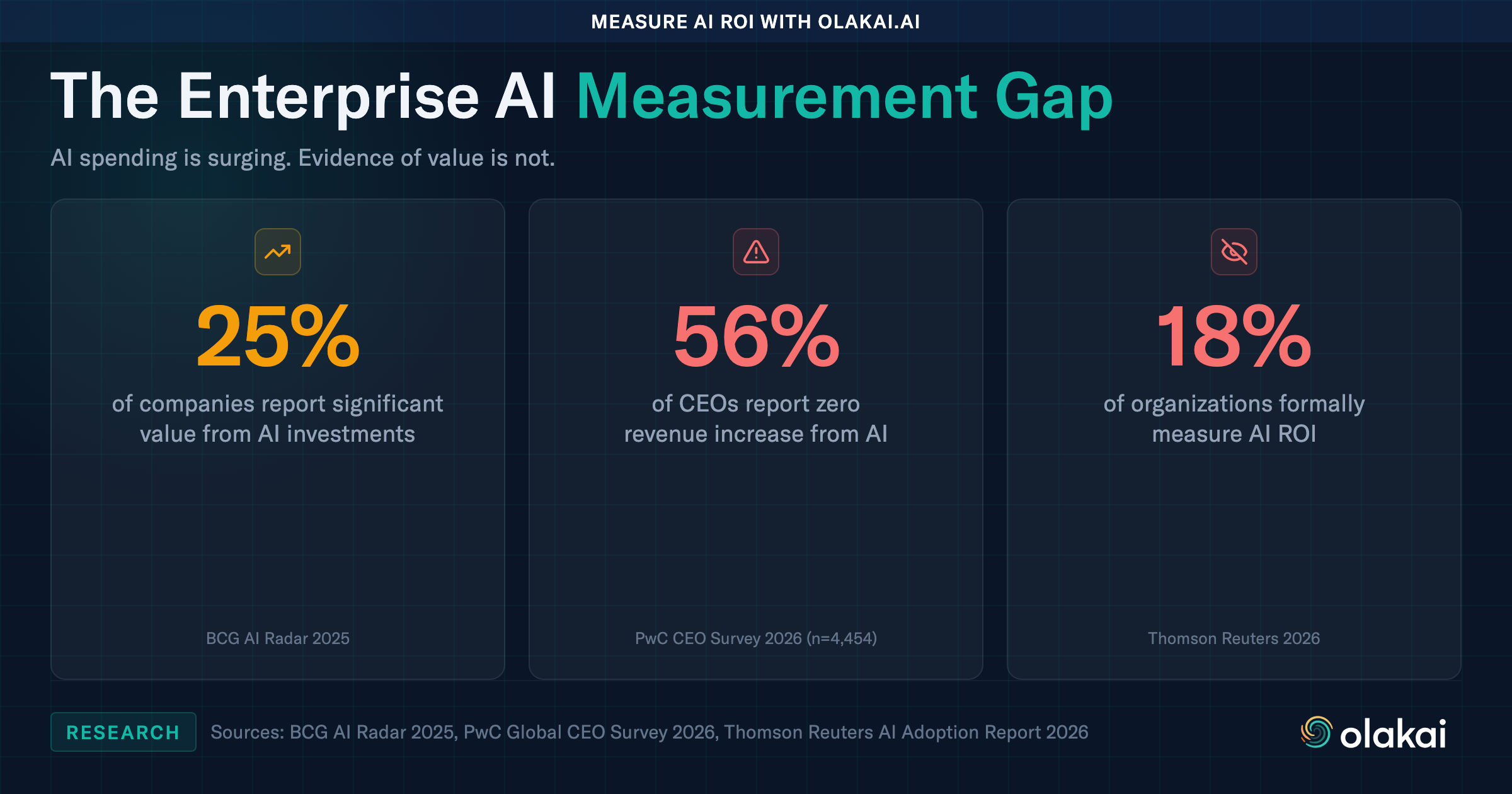

Gartner predicts that more than 40% of agentic AI projects will be canceled by the end of 2027, citing escalating costs, unclear business value, and inadequate risk controls. BCG’s 2025 AI Radar survey of 1,803 C-suite executives found that only 25% of companies report realizing significant value from their AI investments. Thomson Reuters reported in 2026 that just 18% of organizations formally track AI ROI.

These are not isolated findings. They describe a structural gap in how enterprises manage AI: the gap between deploying AI and actually measuring whether it works. AI analytics is the discipline that closes that gap.

What Is AI Analytics?

AI analytics is the practice of measuring the usage, performance, cost, and business impact of artificial intelligence tools across an enterprise. It answers the questions that every CIO, CFO, and board member is now asking: What AI are we using? How much is it costing us? And what are we getting back?

Traditional business intelligence measures the outputs of human processes. AI analytics measures the outputs of AI-augmented and AI-automated processes. That covers everything from how often employees use a chatbot like ChatGPT or Copilot to the success rate and cost-per-execution of autonomous agents running multi-step workflows in production.

The distinction matters because AI adoption has outpaced AI measurement by years. Most enterprises now have dozens of AI tools in active use, each with its own vendor dashboard or no analytics at all. AI analytics provides a unified, vendor-neutral view across all of them.

Why AI Analytics Matters Now

The urgency is driven by three converging forces.

The ROI reckoning. Deloitte’s State of AI 2026 survey of 3,235 business and IT leaders found that 74% of organizations want AI to grow revenue, but only 20% have actually seen it happen. PwC’s 2026 Global CEO Survey found that 56% of CEOs report no revenue increase from AI. Boards are no longer willing to fund AI programs on faith. They want numbers. AI analytics provides those numbers.

The agentic AI wave. Deloitte projects that agentic AI usage will surge from 23% to 74% of enterprises within two years. Unlike chatbots that wait for human prompts, agentic AI takes autonomous actions: executing workflows, calling APIs, making decisions. An ungoverned chatbot gives a bad answer. An ungoverned agent executes a bad decision at scale. Measuring agent performance is not optional. It is the difference between a controlled deployment and an operational risk.

The shadow AI problem. Employees are adopting AI tools faster than IT can track them. Shadow AI creates blind spots in security, compliance, and cost management. AI analytics starts with visibility: discovering what AI is actually being used, by whom, and for what purpose.

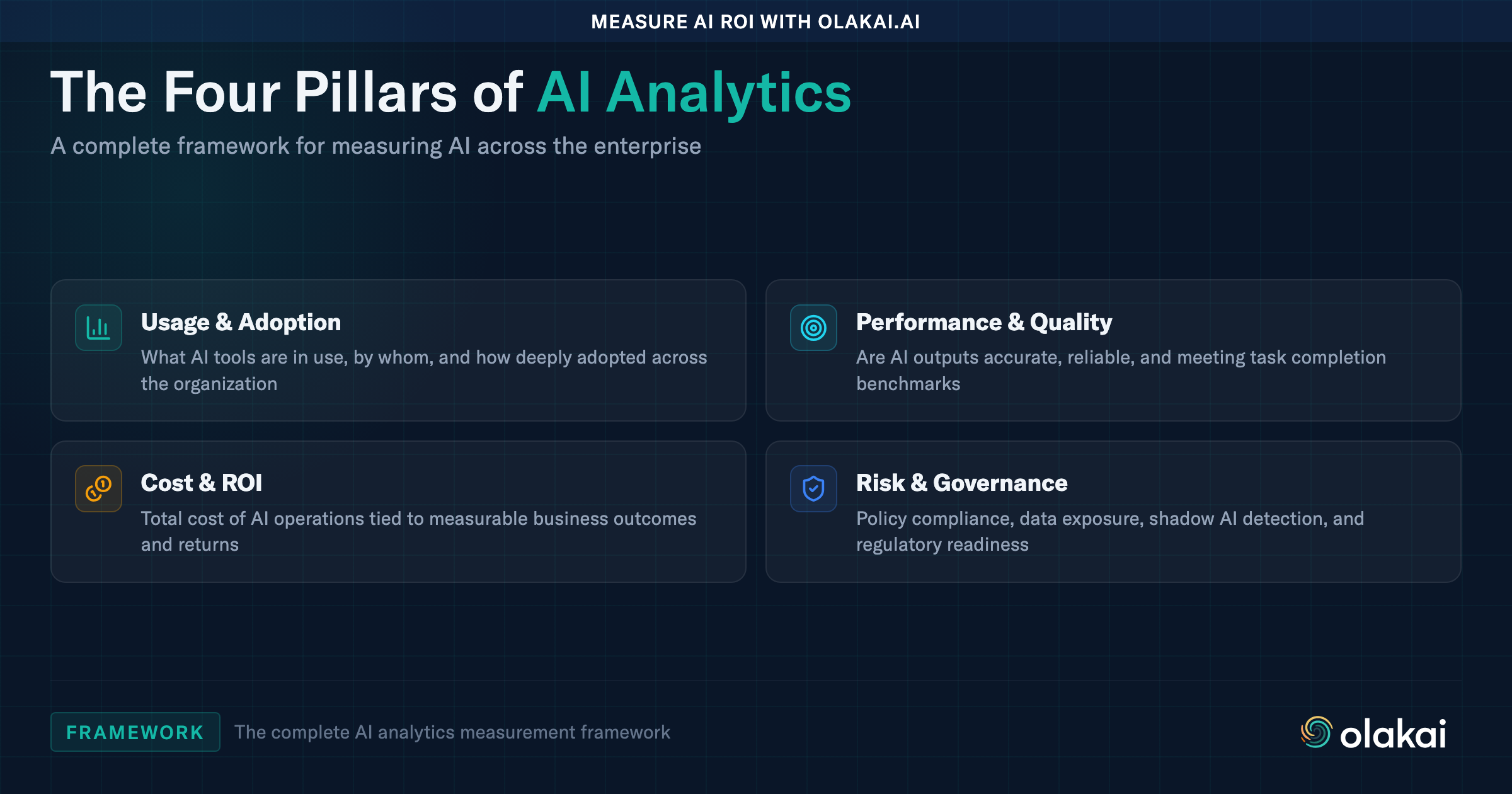

The Four Pillars of AI Analytics

A complete AI analytics practice spans four areas. Each one addresses a different question that enterprise leaders need answered.

1. Usage and Adoption Analytics

This is the foundation: understanding what AI tools are in use across the organization and how deeply they are being adopted. Usage analytics answers questions like: How many employees actively use ChatGPT? Which teams have adopted Copilot? What percentage of licensed AI tools are actually being used?

Without usage data, enterprises operate blind. They cannot optimize license spend because they do not know which tools are underutilized. They cannot identify shadow AI because they do not have a baseline of sanctioned usage to compare against. According to Deloitte, workforce access to sanctioned AI tools expanded from under 40% to roughly 60% of employees in a single year. That growth rate makes continuous usage tracking essential.

2. Performance and Quality Analytics

Beyond knowing that AI is being used, enterprises need to know whether it is performing well. Performance analytics measures the quality and reliability of AI outputs across tools and use cases.

For assistive AI (chatbots and copilots), this includes response accuracy, user satisfaction, and task completion rates. For agentic AI, it includes execution success rates, failure analysis, and decision quality. A custom agent that processes insurance claims might have a 94% success rate, but the 6% failure rate could represent millions in incorrectly handled claims. Performance analytics surfaces these patterns before they become problems.

3. Cost and ROI Analytics

This is where AI analytics becomes strategic. Cost analytics tracks the total cost of AI operations: API calls, compute, licensing, and human oversight time. ROI analytics ties those costs to business outcomes: revenue influenced, time saved, cost avoided, error reduction.

BCG found that 60% of enterprises do not track financial KPIs for their AI programs. This means the majority of organizations cannot answer the most basic question their CFO will ask: Is our AI investment paying off? AI ROI measurement is the capability that separates enterprises scaling AI from those stuck in pilot purgatory.

The math is straightforward but requires instrumentation. If a customer service AI handles 10,000 tickets per month at $0.12 per interaction and replaces a process that previously cost $8.50 per ticket with human agents, the monthly savings are 10,000 × ($8.50 - $0.12) = $83,800. Without AI analytics, that number is an estimate. With it, that number is auditable and defensible in front of a board.
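The arithmetic above can be sketched in a few lines of Python. The function name and parameters are illustrative, not part of any product API, and the sketch assumes every ticket is fully handled by the AI with no residual human cost.

```python
from decimal import Decimal


def monthly_savings(tickets: int, human_cost: str, ai_cost: str) -> Decimal:
    """Savings from moving `tickets` interactions from a human process to AI.

    Costs are passed as strings and converted to Decimal so that
    currency math is not affected by floating-point rounding.
    """
    return tickets * (Decimal(human_cost) - Decimal(ai_cost))


# Figures from the example above: 10,000 tickets at $8.50 (human) vs. $0.12 (AI).
savings = monthly_savings(10_000, "8.50", "0.12")
print(f"${savings:,.2f}")  # $83,800.00
```

In practice the ticket count and per-interaction cost would come from instrumented logs rather than constants, which is what makes the figure auditable.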

4. Risk and Governance Analytics

The fourth pillar connects analytics to governance. Risk analytics monitors AI usage for policy violations, data exposure, bias indicators, and compliance gaps. It answers questions like: Are employees sharing sensitive data with AI tools? Are autonomous agents operating within defined guardrails? Are AI outputs meeting regulatory requirements?

This pillar is increasingly non-negotiable. The EU AI Act mandates risk-based oversight. The NIST AI Risk Management Framework provides voluntary guidance that is rapidly becoming the de facto standard in the United States. Companies in regulated industries such as financial services, healthcare, and government cannot scale AI without demonstrating continuous risk monitoring.

AI Analytics vs. Traditional Observability

Engineering teams are familiar with observability tools like Datadog, New Relic, and Splunk. These tools monitor infrastructure: server uptime, latency, error rates, and throughput. They are necessary but insufficient for AI programs.

AI analytics differs from traditional observability in three fundamental ways.

It measures business outcomes, not just technical metrics. Datadog can tell you that an API call to GPT-4 took 1.2 seconds. AI analytics tells you that the same call saved a sales rep 14 minutes of research and contributed to a deal worth $240,000. The audience is the CIO and CFO, not only the engineering team.

It spans tools and vendors. Each AI vendor provides metrics for its own tool. Microsoft shows Copilot usage. OpenAI shows ChatGPT usage. Salesforce shows Einstein usage. But no vendor will ever show you the cross-vendor picture, because that is not in their interest. AI analytics provides vendor-neutral visibility across the entire AI ecosystem.

It connects usage to governance. Traditional observability does not care whether an employee pasted customer PII into a chatbot. AI analytics does. The integration of usage data, risk signals, and governance policy into a single platform is what makes AI analytics a strategic capability rather than just another dashboard.

What to Measure: Key AI Analytics Metrics

The specific metrics that matter depend on the type of AI being measured and the audience consuming the data. Here is a framework organized by stakeholder.

For the CIO and Board

- AI ROI by business unit: Revenue influenced, cost saved, and time recovered, broken down by department or function

- Adoption rate: Percentage of employees actively using AI tools, tracked over time

- AI maturity score: A composite metric reflecting how effectively the organization uses AI across adoption, measurement, and governance

- Risk posture: Number and severity of policy violations, shadow AI instances, and compliance gaps

For the CFO

- Total cost of AI: All-in spend across licensing, API usage, compute, and personnel

- Cost per AI interaction: What each chatbot conversation, agent execution, or copilot suggestion costs

- License utilization: Percentage of paid AI licenses that are actively used. Low utilization signals wasted spend.

- ROI by AI initiative: Measurable return relative to investment for each major AI program
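Two of the CFO metrics above reduce to simple ratios. The following is a minimal sketch; the function names and the sample figures are hypothetical and chosen only for illustration.

```python
def license_utilization(active_users: int, paid_seats: int) -> float:
    """Share of paid seats with an active user in the reporting period."""
    if paid_seats <= 0:
        raise ValueError("paid_seats must be positive")
    return active_users / paid_seats


def cost_per_interaction(total_cost: float, interactions: int) -> float:
    """All-in spend divided by the number of interactions it paid for."""
    if interactions <= 0:
        raise ValueError("interactions must be positive")
    return total_cost / interactions


# Hypothetical figures for illustration only.
print(f"{license_utilization(420, 1_000):.0%}")         # 42%
print(f"${cost_per_interaction(1_200.0, 10_000):.2f}")  # $0.12
```

How "active" is defined (for example, at least one session in 30 days) is a policy choice that should be fixed before utilization is compared across tools.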

For the CISO

- Shadow AI inventory: Unauthorized AI tools in use, the number of users of each, and the data they access

- Data exposure incidents: Instances of sensitive data shared with AI tools

- Policy compliance rate: Percentage of AI interactions that comply with content and data policies

- Agent guardrail adherence: How often autonomous agents operate within defined boundaries

For Engineering and AI Teams

- Agent success rate: Percentage of agent executions that complete successfully

- Latency and throughput: Response times and processing capacity

- Error classification: Types and frequency of AI failures, broken down by cause

- Model comparison: Performance and cost differences across AI models and vendors for the same task
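As a rough illustration of the first and third engineering metrics, the sketch below computes an agent success rate and a failure breakdown from an execution log. The log format, agent name, and error causes are all hypothetical.

```python
from collections import Counter

# Hypothetical execution log: (agent_name, status, error_cause).
runs = [
    ("claims-agent", "success", None),
    ("claims-agent", "failure", "timeout"),
    ("claims-agent", "success", None),
    ("claims-agent", "failure", "invalid_input"),
    ("claims-agent", "failure", "timeout"),
]


def success_rate(records) -> float:
    """Fraction of executions whose status is 'success'."""
    if not records:
        return 0.0
    successes = sum(1 for _, status, _ in records if status == "success")
    return successes / len(records)


def error_breakdown(records) -> Counter:
    """Count failed executions by recorded cause."""
    return Counter(cause for _, status, cause in records if status == "failure")


print(f"{success_rate(runs):.0%}")          # 40%
print(error_breakdown(runs).most_common())  # [('timeout', 2), ('invalid_input', 1)]
```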

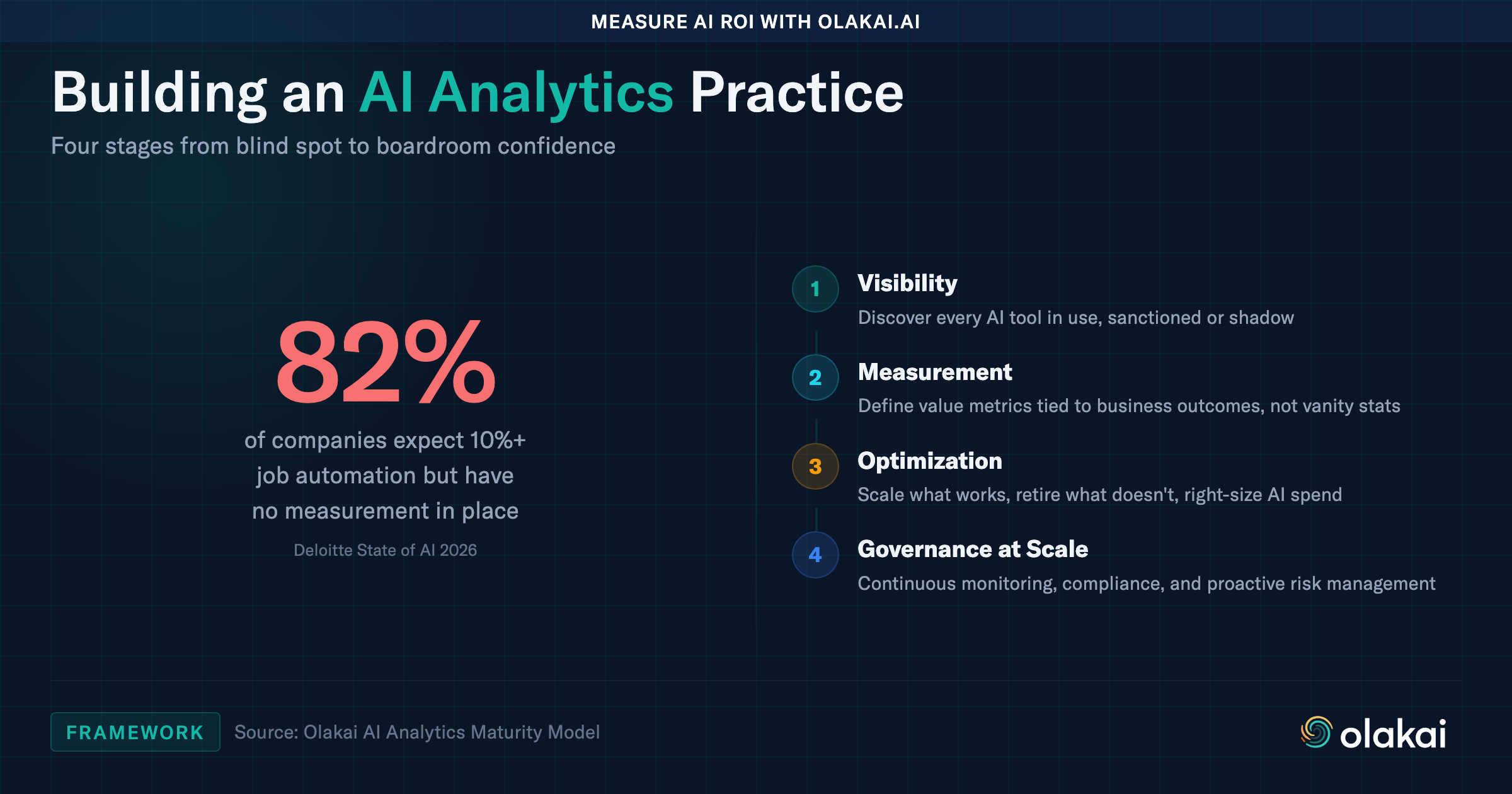

How to Build an AI Analytics Practice

Organizations typically progress through four stages when building an AI analytics capability. Understanding where you are today helps determine the right next step.

Stage 1: Visibility

The first step is simply knowing what AI is in use. Most enterprises are surprised by the results of an AI visibility audit. Shadow AI is nearly universal: employees are using AI tools that IT has not sanctioned, often with company data. Stage 1 focuses on discovery and inventory: building a complete picture of the AI tools, users, and data flows across the organization.
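At its simplest, the discovery step compares observed tool usage against a sanctioned list. The sketch below assumes usage records are already available (for example, from SSO or network logs); the tool and user names are hypothetical.

```python
# Hypothetical inputs: a sanctioned-tool list from IT and (user, tool)
# pairs observed in usage logs.
sanctioned = {"ChatGPT Enterprise", "Copilot"}
observed_usage = [
    ("alice", "Copilot"),
    ("bob", "ChatGPT Enterprise"),
    ("carol", "UnlistedChatApp"),
    ("dave", "UnlistedChatApp"),
    ("erin", "Copilot"),
]


def shadow_ai_inventory(usage, approved):
    """Map each unsanctioned tool to the set of users seen using it."""
    inventory = {}
    for user, tool in usage:
        if tool not in approved:
            inventory.setdefault(tool, set()).add(user)
    return inventory


inventory = shadow_ai_inventory(observed_usage, sanctioned)
for tool, users in sorted(inventory.items()):
    print(tool, len(users))  # UnlistedChatApp 2
```

A real audit would also need to capture which data each tool touches, which this sketch does not attempt.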

Stage 2: Measurement

Once you have visibility, you can start measuring. This means defining the metrics that matter for each AI initiative and instrumenting systems to capture them. The key shift at this stage is moving from vanity metrics (number of prompts, number of users) to value metrics (time saved, revenue influenced, cost avoided). Olakai’s SEE, MEASURE, DECIDE, ACT framework provides a structured approach to this transition.

Stage 3: Optimization

With measurement in place, enterprises can make data-driven decisions about their AI programs. Which tools deliver the highest ROI? Which pilots should scale to production? Which agents should be retired? Structured pilot programs with clear success criteria replace the ad hoc experimentation that traps most organizations in pilot purgatory. Optimization also includes cost management: identifying redundant tools, right-sizing API usage, and negotiating vendor contracts with actual usage data.

Stage 4: Governance at Scale

The final stage integrates analytics with governance. As AI programs grow from a handful of pilots to hundreds of production deployments, the analytics framework must support policy enforcement, compliance reporting, and risk management at scale. This is where organizations move from reactive oversight (responding to incidents) to proactive governance (preventing them). Analytics provides the continuous monitoring that makes proactive governance possible.

The Vendor-Neutral Imperative

One of the most common mistakes enterprises make is relying on AI vendors to provide their own analytics. Microsoft offers Copilot usage dashboards. OpenAI offers a usage portal for ChatGPT Enterprise. Salesforce shows Einstein adoption metrics. Each provides useful data about its own tool. None will ever provide the cross-vendor picture.

This is not a criticism of those vendors. It is a structural limitation. Microsoft has no incentive to show you that a competitor’s tool outperforms Copilot for a given use case. OpenAI has no incentive to help you discover that your team stopped using ChatGPT and switched to Claude. The only way to get an honest, complete picture of AI performance across your organization is through a vendor-neutral analytics platform that sits above individual tools.

Olakai was built specifically for this purpose. The platform provides unified visibility across chatbots, copilots, agents, and AI-enabled SaaS, with custom KPIs tied to business outcomes rather than vendor-specific metrics.

Frequently Asked Questions

What is the difference between AI analytics and AI observability?

AI observability focuses on the technical performance of AI systems: latency, error rates, model accuracy, and infrastructure health. AI analytics extends beyond technical metrics to include business outcomes, ROI measurement, cost analysis, and governance. Observability tells you whether the system is running. Analytics tells you whether it is delivering value.

How do you measure AI ROI?

AI ROI is measured by comparing the total cost of an AI initiative (licensing, compute, API calls, implementation, and human oversight) against the measurable business value it creates (time saved, revenue influenced, cost avoided, error reduction). The key is instrumenting AI systems to capture both sides of this equation continuously, not just during quarterly reviews. Olakai’s AI ROI measurement capability automates this process across all AI tools.
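One common way to express that comparison is net return divided by cost. The sketch below uses that conventional formula; the cost and value categories mirror the ones named above, but the dollar figures are hypothetical, and this is not a description of how any particular platform computes ROI.

```python
def roi(total_value: float, total_cost: float) -> float:
    """Net return per dollar spent: (value - cost) / cost."""
    if total_cost <= 0:
        raise ValueError("total_cost must be positive")
    return (total_value - total_cost) / total_cost


# Hypothetical monthly figures for a single AI initiative.
costs = {"licensing": 4_000, "api_calls": 1_200, "oversight": 2_800}
value = {"time_saved": 15_000, "cost_avoided": 9_000}

print(f"{roi(sum(value.values()), sum(costs.values())):.0%}")  # 200%
```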

What is shadow AI and why does it matter for analytics?

Shadow AI refers to AI tools used by employees without IT approval or oversight. It matters for analytics because you cannot measure what you cannot see. If 30% of your AI usage is happening in unsanctioned tools, your analytics are incomplete, your cost estimates are wrong, and your security posture has blind spots. Shadow AI detection is typically the first step in building an AI analytics practice.

Do you need a dedicated platform for AI analytics?

For organizations with one or two AI tools, vendor-provided dashboards may suffice. For enterprises using multiple AI tools across multiple teams, vendor dashboards create fragmented, siloed views. A dedicated AI analytics platform provides the unified, vendor-neutral perspective needed to make strategic decisions about the AI program as a whole, not just individual tools in isolation.

What industries benefit most from AI analytics?

Every industry deploying AI at scale benefits from analytics, but the urgency is highest in regulated industries. Financial services, healthcare, and government face regulatory requirements that demand continuous monitoring and audit-ready evidence. Technology companies benefit from the ROI optimization angle: understanding which AI investments deliver the highest return.

Key Takeaways

- AI analytics is the practice of measuring AI usage, performance, cost, and business impact across an enterprise

- Only 25% of companies report significant value from AI (BCG), and only 18% formally track AI ROI (Thomson Reuters). The measurement gap is the primary barrier to scaling AI programs.

- The four pillars are usage analytics, performance analytics, cost and ROI analytics, and risk and governance analytics

- AI analytics differs from traditional observability by measuring business outcomes, spanning vendors, and integrating governance

- Vendor-neutral analytics is essential because no single AI vendor has an incentive to provide a complete cross-vendor picture

- Building an AI analytics practice follows four stages: visibility, measurement, optimization, and governance at scale
