Your engineering team just shipped 10,000 lines of code this sprint. Nearly half of it was written by AI. Do you know which half — and whether it was any good?

This isn’t a theoretical question anymore. According to the 2025 DORA Report, almost half of companies now have at least 50% AI-generated code, up from just 20% at the start of 2025. Ninety percent of engineering teams now use AI coding tools in their workflows. Cursor crossed $2 billion in annualized revenue by February 2026. Claude Code hit $2.5 billion. GitHub Copilot remains embedded in enterprises worldwide. The adoption question is settled.

The measurement question is not.

The Measurement Gap Nobody Talks About

Here’s what most engineering leaders are tracking: lines of code generated, completion acceptance rates, developer satisfaction surveys, and seat utilization. These are vanity metrics. They tell you that developers are using the tools. They don’t tell you whether the tools are making your organization better.

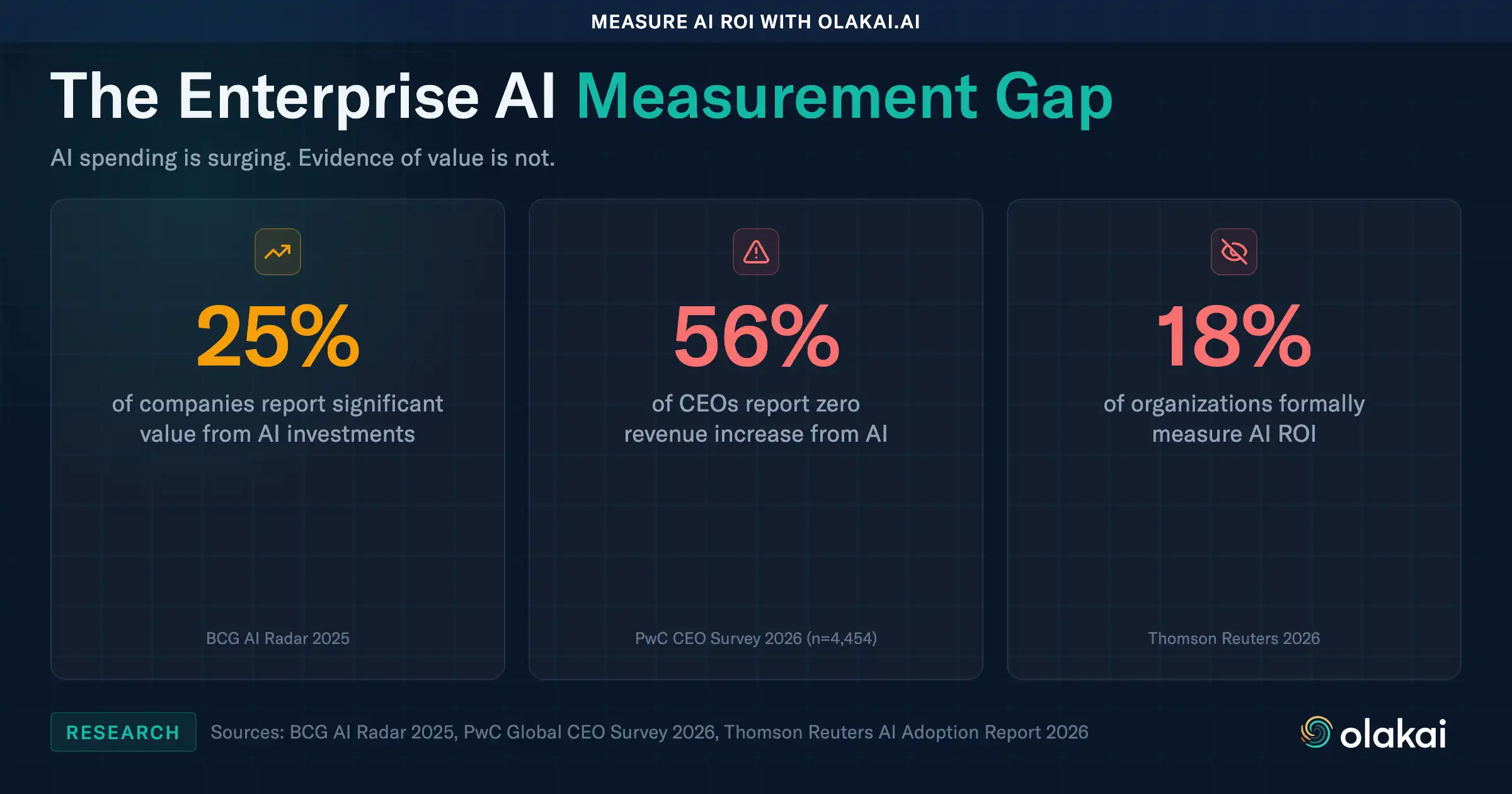

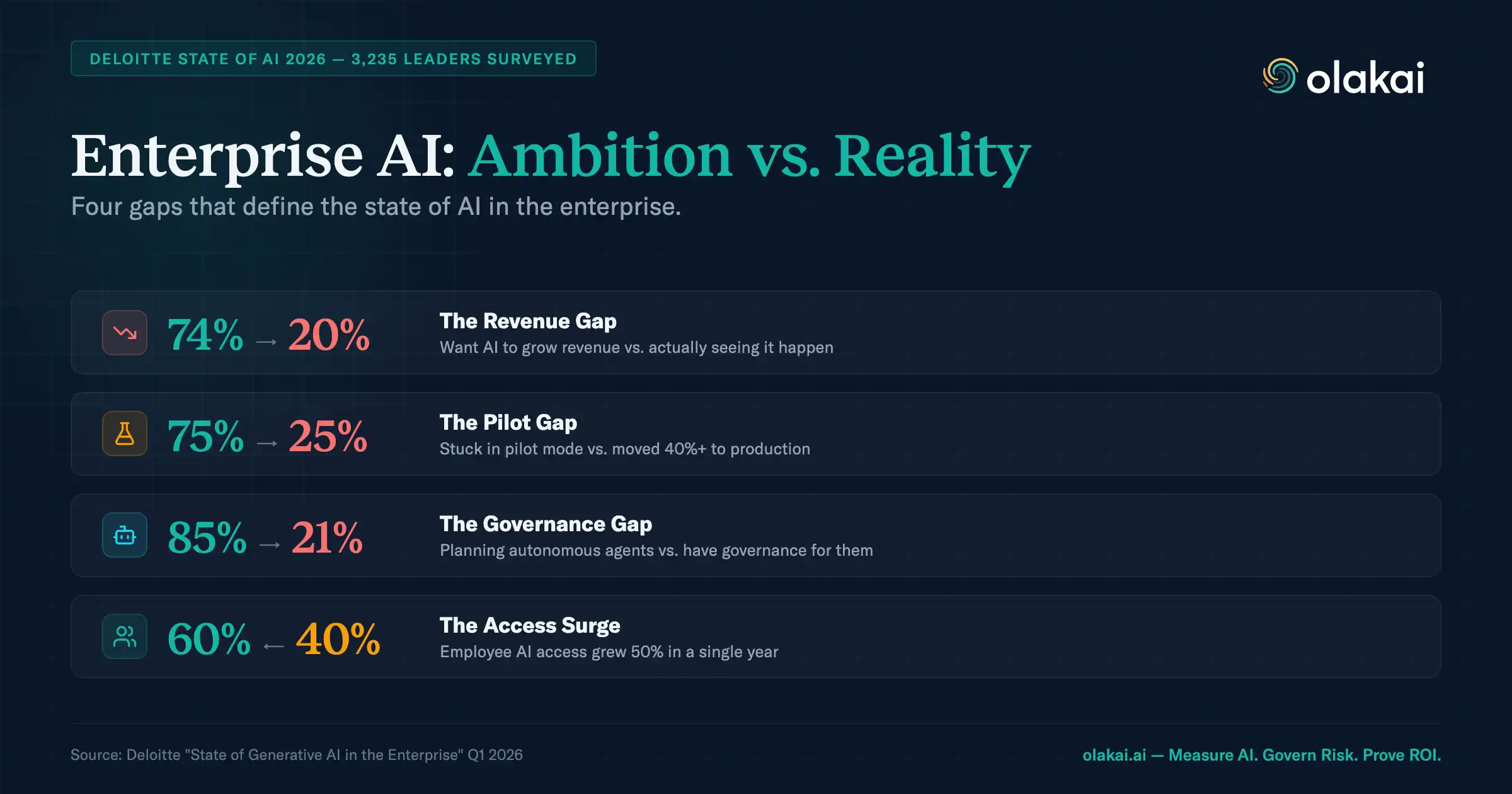

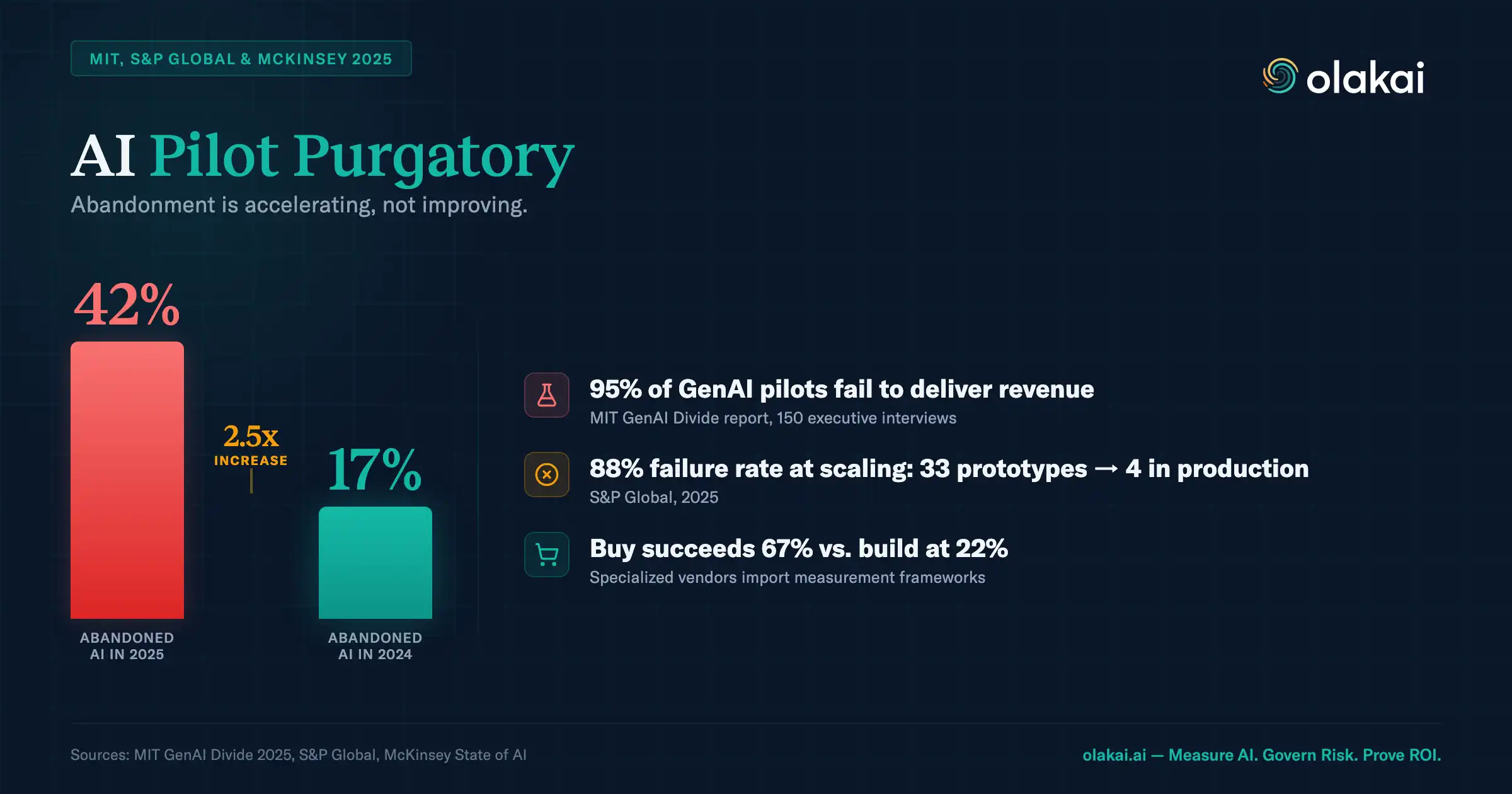

BCG found that 60% of companies have no defined financial KPIs for their AI initiatives — they’re counting pilots, celebrating deployments, and measuring model accuracy instead of actual business value. Bain’s 2025 Technology Report went further, finding that AI coding tools deliver only 10 to 15 percent productivity gains despite adoption by two-thirds of software firms. That’s a fraction of the 10x improvement vendors promised.

The gap between what companies measure and what actually matters is where millions disappear. Your board isn’t asking how many code completions your team accepted last quarter. They’re asking whether your $1.2 million in AI coding tool licenses is making your engineering organization faster, safer, and more competitive. If you can’t answer that question with data, you have a measurement problem — not a productivity problem.

What You Should Be Measuring Instead

The metrics that matter for AI coding tools aren’t about the tools themselves. They’re about what happens after the code ships.

Cycle time delta. How much faster do AI-assisted pull requests move from first commit to production compared to non-AI pull requests? This is the clearest signal of real productivity gain. Early data suggests AI-assisted PRs are 25 to 40 percent faster through the pipeline, but this varies wildly by team, codebase complexity, and tool. If you aren’t measuring the delta, you’re guessing.

Incident rate on AI-authored code. A Stanford study cited by CIO.com found that participants using coding assistants wrote less secure code in 80% of tasks — yet were 3.5 times more likely to believe their code was secure. That confidence gap is dangerous. If your AI-generated code is creating more production incidents, more security vulnerabilities, or more hotfixes, the productivity gains are illusory. You need to track post-deployment quality by code origin.

Cost per pull request by provider. Your team is probably using three or four AI coding tools simultaneously — Copilot on some repos, Cursor on others, Claude Code for complex refactors. Each has different pricing, different token consumption patterns, and different value profiles. Without a unified cost-per-PR metric across providers, you can’t make rational decisions about which tools to standardize and which licenses are going unused.

Deployment frequency. The DORA framework remains the gold standard for engineering performance, but AI introduces a wrinkle. Deployment frequency may rise slightly while lead times increase as review cycles grow longer to accommodate AI-generated code. Measuring deployment frequency in isolation misses this dynamic. You need to track it alongside review time and change failure rate to see the full picture.

The Shadow Coding Problem

There’s another dimension most CTOs haven’t confronted: developers using personal accounts for AI coding tools that your organization doesn’t manage, monitor, or govern.

A developer signs up for Cursor with a personal email. Another uses Claude Code through a personal API key. A third is running a locally hosted model for code generation. None of these show up in your IT asset inventory. None are covered by your data handling policies. And all of them are processing your proprietary source code through systems you don’t control.

This is shadow AI in the codebase — and it’s arguably more dangerous than shadow AI in other parts of the organization because the outputs become permanent parts of your software. Code generated through ungoverned tools gets committed, reviewed, merged, and deployed. It becomes your product. If that code was generated using a model that trained on GPL-licensed code, or if proprietary algorithms were sent to a third-party API without appropriate data handling agreements, the liability sits with your organization — not the developer.

According to HiddenLayer’s 2026 AI Threat Landscape Report, 76% of organizations now cite shadow AI as a definite or probable problem, a 15-point jump from the prior year. For engineering organizations, the stakes are uniquely high because the shadow doesn’t just create risk — it becomes part of the product.

The Adoption Cohort Blindspot

Aggregate metrics hide critical patterns. When engineering leaders report that “our team has 70% AI adoption,” they’re averaging over a distribution that looks nothing like a uniform curve.

In practice, adoption breaks into cohorts. Power users — developers with more than 70% of their pull requests AI-assisted — are producing dramatically different work than casual users at 20 to 40 percent. New adopters who started using AI tools within the past two weeks have different needs than idle users who tried a tool once and stopped. Each cohort requires different support, different training, and different expectations.

Without cohort-level visibility, you can’t identify which developers are getting genuine value, which ones need enablement, and which expensive licenses are sitting unused. You also can’t detect the productivity paradox that multiple studies have now documented: developers predict a 24% speedup from AI tools but some studies have measured a 19% slowdown, while those same developers still report a 20% perceived improvement afterward. The gap between perception and measurement is real, and only cohort-level data can surface it.

What the Competitors Miss

Engineering analytics platforms like Jellyfish have built impressive capabilities for measuring developer productivity. They can track DORA metrics, analyze PR throughput, and benchmark teams against each other. But they were built before AI coding became the default mode of software development, and their architecture reflects that.

Most engineering analytics tools work from metadata — commit timestamps, PR merge events, Jira ticket transitions. They can tell you that a developer merged 12 PRs this week. They can’t tell you which of those PRs were AI-assisted, what tool was used, how much it cost, or whether the AI-generated portions introduced quality issues. Without code-level detection that identifies AI co-author trailers, bot PR authors, and tool-specific markers, the attribution problem remains unsolvable.

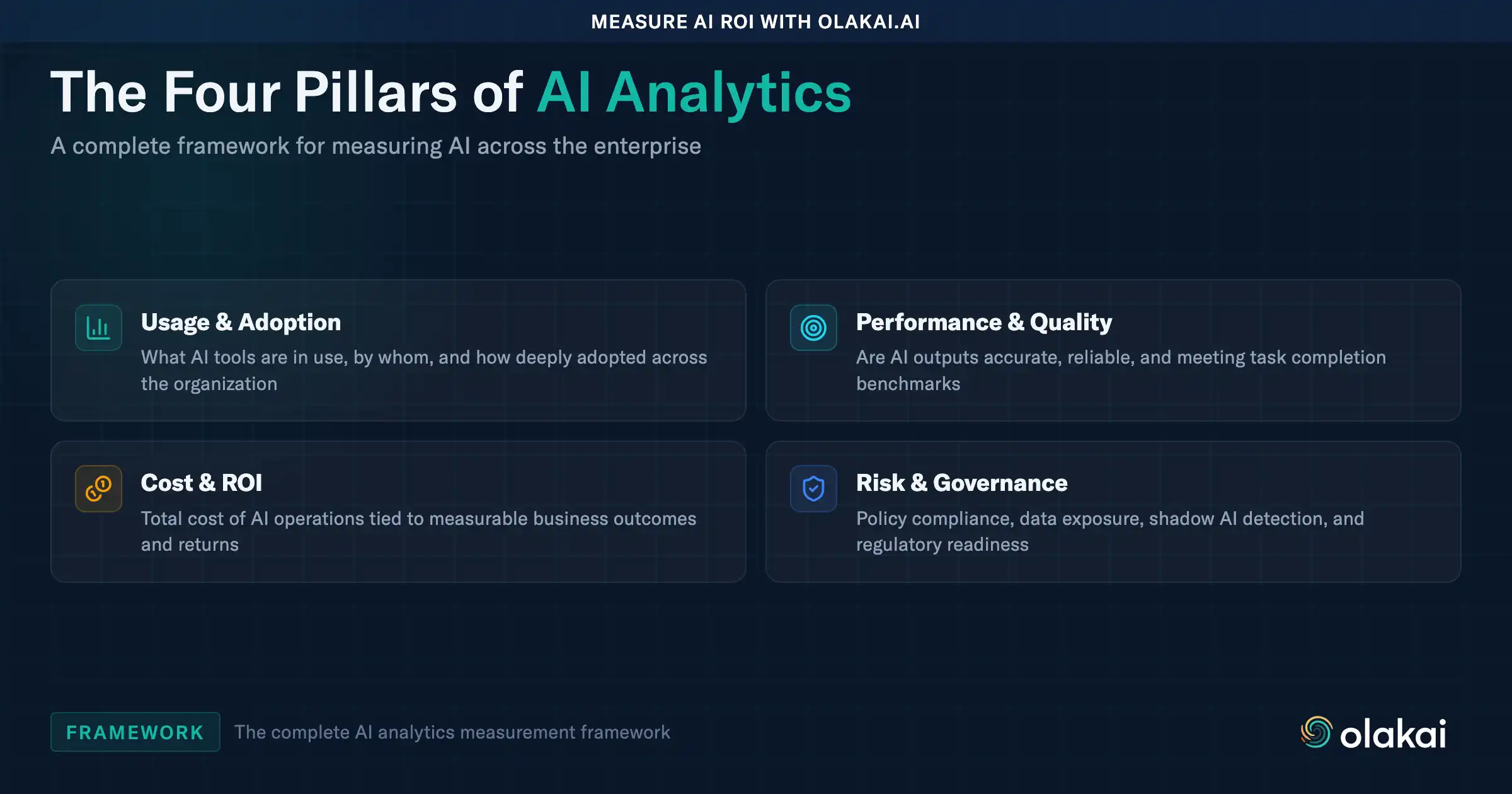

Then there’s the governance dimension. Your CISO needs to know which AI tools are processing your source code and whether they comply with your data handling policies. Your CFO needs to know the total cost across all AI coding providers, not just the ones IT provisioned. Your compliance team needs an audit trail showing what code was AI-generated and by which model. Productivity analytics tools don’t cover any of this.

The measurement gap isn’t just about better dashboards. It’s about connecting AI ROI measurement with governance, cost control, and security in a single view — the same way organizations learned to manage cloud infrastructure by combining performance monitoring with cost optimization and compliance controls.

Building the Framework

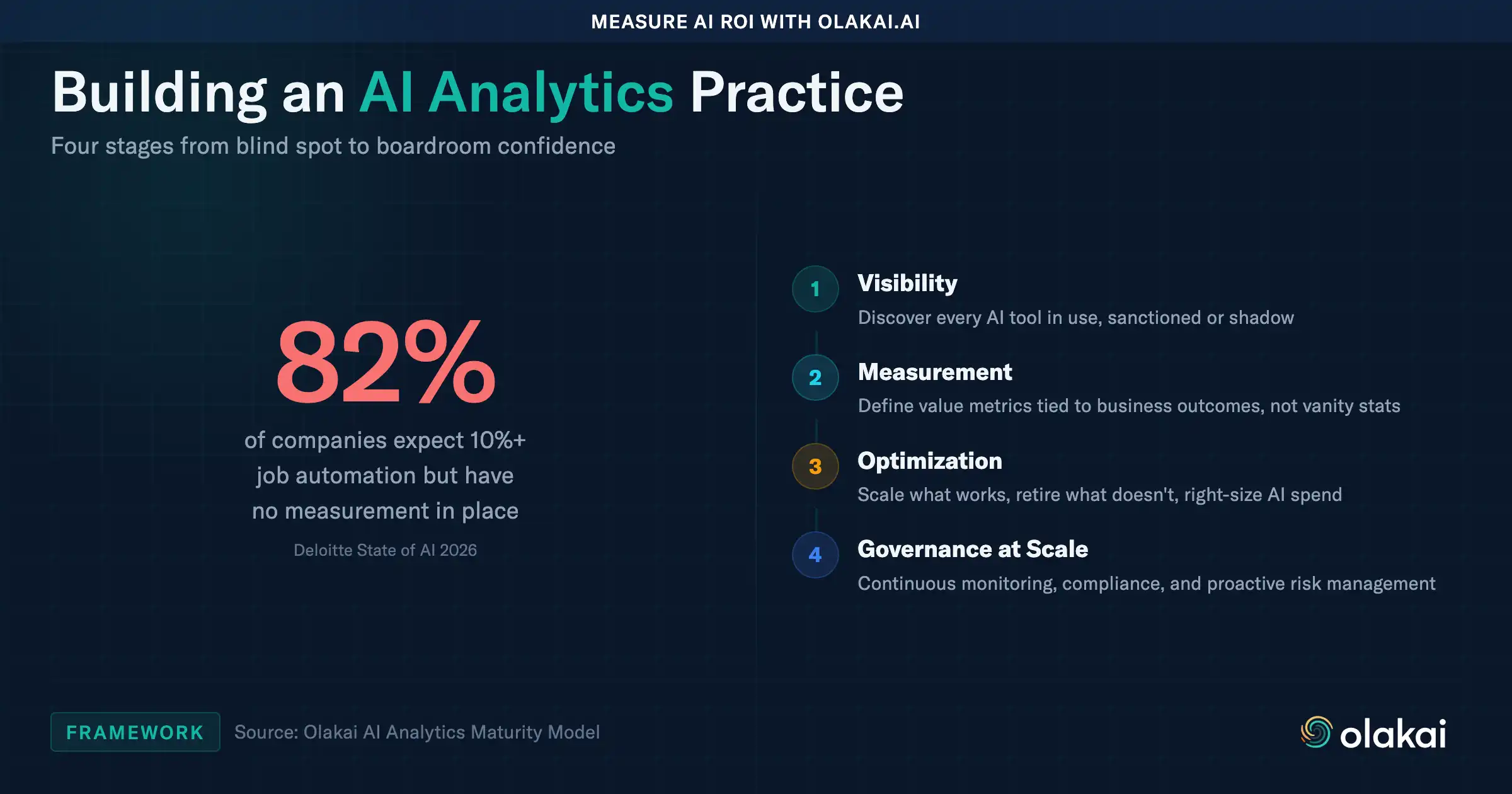

If you’re spending six or seven figures on AI coding tools and can’t answer basic questions about their impact, here’s where to start.

First, establish a baseline. Before you can measure improvement, you need to know where you stand. What percentage of your pull requests are AI-assisted? What’s your current cycle time for AI-assisted versus non-AI code? What are you spending per developer, per provider, per month? Most engineering organizations can’t answer these questions today.

Second, segment by cohort. Stop reporting a single adoption number. Break your engineering organization into power users, casual users, new adopters, and idle license holders. Each cohort tells a different story, and each requires a different response.

Third, connect quality to origin. Track incident rates, security findings, and change failure rates by whether the code was AI-assisted or not. This is the data your board actually needs — not how many lines the AI generated, but whether those lines made your product better or worse.

Fourth, unify cost visibility. Aggregate spending across Copilot, Cursor, Claude Code, and every other tool your developers are using — including the ones they’re paying for themselves. The enterprise AI revenue gap starts with cost sprawl that nobody can see.

The organizations that will win the AI coding race aren’t the ones that adopt the most tools. They’re the ones that measure the right things, govern the risks, and make data-driven decisions about where to invest. Your AI coding tools are generating code. The question is whether they’re generating value.

Want to see how your engineering AI investment is actually performing? Schedule a demo to see Coding IQ in action — vendor-neutral analytics across every AI coding tool your team uses.